How to Get Rid of Software Glitches: A Practical Troubleshooting Guide

A practical, step-by-step guide to diagnosing, fixing, and preventing software glitches across apps and systems. Learn reproducible testing, targeted fixes, and robust validation to improve reliability.

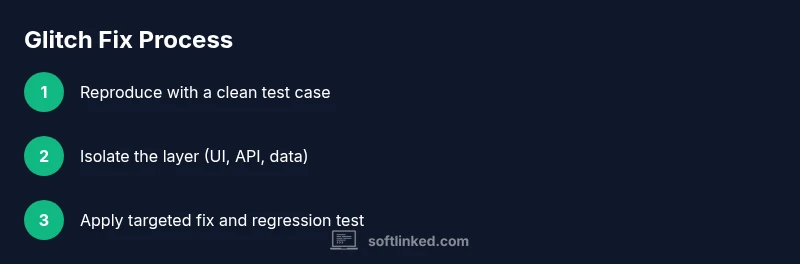

Getting rid of software glitches takes a practical, step-by-step approach to diagnosing, isolating, and fixing issues across your operating system, apps, and code. Following stable debugging practices reduces recurring bugs and downtime. SoftLinked recommends starting with a reproducible scenario, collecting error data, applying targeted fixes, and then verifying the result with thorough testing.

What Causes Software Glitches

Software glitches arise from a mix of predictable and unforeseen factors. Common culprits include race conditions in concurrent code, memory leaks that slowly degrade performance, dependency version mismatches, and edge-case inputs that surface only after a particular sequence of user actions. Environmental factors like OS updates, driver issues, and subtle network timing problems can also trigger glitches. Understanding these root causes helps you choose the right diagnostic tools and avoid chasing symptoms instead of the actual problem. In practice, teams that log reproducible scenarios and organize error data consistently see faster root-cause analysis. The SoftLinked team emphasizes that a structured approach reduces firefighting and shortens repair cycles. By collecting context (versions, hardware, steps to reproduce), you create a reliable foundation for fixes and prevention.
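
To make the race-condition case concrete, here is a minimal Python sketch. The `Counter` class and `run_workers` helper are hypothetical names invented for illustration. The lock makes the read-modify-write step atomic, which is one common way to avoid lost updates when several threads share state.

```python
import threading


class Counter:
    """A shared counter whose increments are guarded by a lock (illustrative)."""

    def __init__(self) -> None:
        self.value = 0
        self._lock = threading.Lock()

    def increment(self) -> None:
        # Without the lock, the read-modify-write below could interleave
        # between threads and silently lose updates.
        with self._lock:
            self.value += 1


def run_workers(counter: Counter, workers: int, increments: int) -> int:
    """Increment the counter from several threads and return the final value."""

    def work() -> None:
        for _ in range(increments):
            counter.increment()

    threads = [threading.Thread(target=work) for _ in range(workers)]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return counter.value


if __name__ == "__main__":
    # 4 workers x 10,000 increments each
    print(run_workers(Counter(), workers=4, increments=10_000))  # prints 40000
```

Removing the `with self._lock:` line is a quick way to check whether a suspected race is real, although lost updates may not show up on every run.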

A Systematic Diagnosis Framework

A disciplined diagnosis framework turns messy bug reports into actionable hypotheses. Start with observations (what happened?), move to data collection (logs, metrics, traces), form a hypothesis (where could it be happening?), and test it with targeted experiments. Use a lightweight, repeatable playbook that any team member can run. This framework helps separate flaky failures from persistent glitches and supports safer rollbacks if a fix backfires. SoftLinked's analysis suggests that teams with a formal test plan and clear ownership reduce their mean time to repair. Incorporate automated checks where possible, but don't rely solely on automation; human judgment remains essential for ambiguous failures.
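
One way to separate flaky failures from persistent ones is to rerun the same check several times and look at the pattern. The sketch below assumes a hypothetical `check` callable that returns `True` when the scenario passes. The three labels are illustrative, not a standard taxonomy.

```python
from typing import Callable


def classify_failure(check: Callable[[], bool], runs: int = 10) -> str:
    """Run `check` repeatedly and label the overall outcome.

    Returns "passing" if no run fails, "persistent" if every run fails,
    and "flaky" if the results are mixed.
    """
    if runs <= 0:
        raise ValueError("runs must be positive")
    failures = sum(1 for _ in range(runs) if not check())
    if failures == 0:
        return "passing"
    if failures == runs:
        return "persistent"
    return "flaky"
```

A "flaky" result usually points toward timing, ordering, or shared state, while a "persistent" result is a better candidate for a straightforward logic or configuration fix.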

Reproduce the Bug with a Clear Test Case

A reliable bug report begins with a precise reproduction. Include the exact steps, input data, environment details (OS version, app version, device model), and the observed outcome. Capture screenshots or screen recordings when possible, and record any error messages or stack traces. Reproducing the issue in a controlled environment (e.g., a dedicated VM or container) reduces noise and ensures you're testing the same scenario each time. Maintain a running log of attempts, noting which changes do and do not affect the result. Well-documented reproduction is the difference between a slow chase and a fast fix, and it aligns teams around a shared understanding of the problem.
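
Environment details are easy to forget, so it can help to capture them automatically. The following sketch uses Python's standard `platform` and `sys` modules. The `build_bug_report` function and its field names are assumptions made for illustration, not a required report format.

```python
import json
import platform
import sys
from datetime import datetime, timezone


def build_bug_report(steps: list[str], observed: str, app_version: str) -> dict:
    """Bundle reproduction steps with a snapshot of the current environment."""
    return {
        "captured_at": datetime.now(timezone.utc).isoformat(),
        "app_version": app_version,  # supplied by the caller
        "environment": {
            "os": platform.system(),
            "os_release": platform.release(),
            "machine": platform.machine(),
            "python": sys.version.split()[0],
        },
        "steps": steps,
        "observed": observed,
    }


if __name__ == "__main__":
    # The steps, outcome, and version below are made-up sample values.
    report = build_bug_report(
        steps=["Open the settings page", "Click Save twice quickly"],
        observed="Second click shows a blank page",
        app_version="1.0.0",
    )
    print(json.dumps(report, indent=2))
```

Because the report is plain JSON-serializable data, it can be attached to a ticket or compared across attempts.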

Isolate the Root Cause Across Layers

Glitches rarely reside in a single place. Isolate by layer: UI, frontend logic, API/back-end services, data storage, and infrastructure. Use divide-and-conquer tests to rule out the layers that are not involved. For example, disable client-side logic to see if the issue persists, then test API responses independently. Mapping symptoms to potential layers helps you prioritize fixes and minimizes risk. When you find a likely culprit, implement a small, testable change and watch for regressions in related flows. The goal is to avoid sweeping rewrites and instead apply focused, verifiable changes.
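
The divide-and-conquer idea can be sketched as a set of independent probes, one per layer, each fed a known-good input instead of the previous layer's output. All function names below (`parse_quantity`, `total_price`, `to_record`, `first_failing_layer`) are hypothetical stand-ins, and the bug in `total_price` is planted on purpose for the demo.

```python
from typing import Any, Callable, Optional

# Each probe: (layer name, function under test, known-good input, expected output)
Probe = tuple[str, Callable[[Any], Any], Any, Any]


def first_failing_layer(probes: list[Probe]) -> Optional[str]:
    """Return the name of the first layer whose probe fails, or None."""
    for name, func, known_good_input, expected in probes:
        try:
            actual = func(known_good_input)
        except Exception:
            return name  # a crash counts as a failure of this layer
        if actual != expected:
            return name
    return None


def parse_quantity(text: str) -> int:
    """Stand-in for UI input handling."""
    return int(text.strip())


def total_price(quantity: int) -> int:
    """Stand-in for business logic with a unit price of 5."""
    return quantity + 5  # planted bug: should be quantity * 5


def to_record(total: int) -> dict:
    """Stand-in for the data layer."""
    return {"total": total}


PROBES: list[Probe] = [
    ("ui", parse_quantity, " 3 ", 3),
    ("logic", total_price, 3, 15),
    ("data", to_record, 15, {"total": 15}),
]

if __name__ == "__main__":
    print(first_failing_layer(PROBES))  # prints logic
```

Feeding each layer its own known-good input matters: chaining the layers would let a failure upstream mask or mimic a failure downstream.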

Apply Fixes by Layer: UI, Logic, Data

Apply targeted fixes rather than broad rewrites. Start with the smallest change that could plausibly resolve the glitch, and ensure you have a regression test that would have caught the issue previously. If a fix touches multiple layers, implement it in stages and validate each stage independently. Document the rationale behind the change and how it reduces the risk of new glitches. After implementing the fix, run the full suite of tests, plus targeted exploratory tests that mimic real user behavior. This discipline reduces the chance of introducing new bugs while fixing the original one.
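
As an illustration of pairing a small fix with a regression test, the sketch below uses Python's standard `unittest` module. The `average` function and its empty-input bug are a made-up example, not taken from any particular codebase.

```python
import unittest


def average(values: list[float]) -> float:
    """Return the mean of `values`, or 0.0 for an empty list.

    The empty-list guard is the (hypothetical) fix: without it, an empty
    input raises ZeroDivisionError.
    """
    if not values:
        return 0.0
    return sum(values) / len(values)


class AverageRegressionTest(unittest.TestCase):
    def test_empty_input_returns_zero(self) -> None:
        # This test would have failed before the fix.
        self.assertEqual(average([]), 0.0)

    def test_existing_behaviour_is_unchanged(self) -> None:
        # Guards against the fix breaking the normal path.
        self.assertEqual(average([2.0, 4.0]), 3.0)


def run_regression_suite() -> bool:
    """Run the tests above and report whether they all passed."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(AverageRegressionTest)
    result = unittest.TextTestRunner(verbosity=0).run(suite)
    return result.wasSuccessful()
```

The first test encodes the original failure, and the second protects behavior that already worked, which mirrors the two goals described above.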

Verification, Regression Testing, and Rollout

Verification should confirm that the glitch no longer occurs and that no previously working functionality is broken. Run regression tests across supported environments, including different OS versions and hardware if relevant. Automate as much as possible, but complement with manual exploratory testing for non-deterministic glitches. Prepare a rollback plan in case the fix causes unintended side effects. When confident, deploy to production with feature flags or a staged rollout to monitor user impact and capture any new signals that might suggest a residual issue.
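
A staged rollout can be approximated with a percentage-based feature flag. The sketch below hashes a user ID into a stable bucket so that the same user gets the same answer on every request. The function names and the flag name are assumptions for illustration; real feature-flag services offer their own APIs.

```python
import hashlib


def rollout_bucket(user_id: str, flag_name: str) -> int:
    """Map a user to a stable bucket in the range 0-99 for a given flag."""
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % 100


def is_enabled(user_id: str, flag_name: str, rollout_percent: int) -> bool:
    """Return True if the user falls inside the current rollout percentage."""
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    return rollout_bucket(user_id, flag_name) < rollout_percent
```

Because buckets are fixed per user, raising the percentage only adds users and never removes anyone who already has the fix. Setting the percentage back to 0 acts as a quick rollback.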

Environments and Common Fixes by Context

Different environments demand different remedial patterns. Desktop applications may benefit from memory profiling and UI event debouncing, while web apps often need robust input validation, idempotent API calls, and proper error handling. Mobile apps frequently require careful lifecycle management and offline data handling. In many cases, updating dependencies, clearing caches, or reconfiguring build settings resolves issues quickly. The key is to tailor fixes to the context, validate in representative scenarios, and avoid assuming that a single universal patch fits all cases.
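
To illustrate the web-app pattern of input validation combined with idempotent API calls, here is a small in-memory sketch. `PaymentService`, `charge`, and the idempotency-key scheme are hypothetical; a real service would persist keys in durable storage.

```python
class PaymentService:
    """Toy service where repeating a request with the same key is harmless."""

    def __init__(self) -> None:
        self._results: dict[str, dict] = {}
        self.charges_made = 0

    def charge(self, idempotency_key: str, amount_cents: int) -> dict:
        # Validate input before doing any work.
        if amount_cents <= 0:
            raise ValueError("amount_cents must be positive")
        # A retried request returns the stored result instead of charging again.
        if idempotency_key in self._results:
            return self._results[idempotency_key]
        self.charges_made += 1
        result = {"charge_id": self.charges_made, "amount_cents": amount_cents}
        self._results[idempotency_key] = result
        return result
```

With this shape, a double click or a network retry that resends the same key produces one charge, which removes a whole class of duplicate-action glitches.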

Prevention: Monitoring, Logging, and Maintenance

Prevention relies on proactive monitoring, thorough logging, and disciplined maintenance. Instrument critical code paths with structured logs and performance traces so you can detect anomalies early. Set up alerting thresholds for error rates, latency, and resource usage to catch glitches before users notice them. Regularly refresh test data, review flaky tests, and keep dependencies up to date in a controlled manner. Build a knowledge base of known issues and fixes, so future glitches can be diagnosed faster. Over time, this practice reduces user impact and sustains software reliability.
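
The sketch below shows one possible shape for structured log lines and an error-rate alert over a sliding window. `format_event`, `ErrorRateMonitor`, the window size, and the 5% threshold are all illustrative assumptions; production systems typically rely on a dedicated monitoring stack.

```python
import json
from collections import deque


def format_event(event: str, **fields: object) -> str:
    """Render an event as one JSON log line with stable key order."""
    return json.dumps({"event": event, **fields}, sort_keys=True)


class ErrorRateMonitor:
    """Track the most recent outcomes and flag when errors exceed a threshold."""

    def __init__(self, window_size: int = 100, threshold: float = 0.05) -> None:
        # deque(maxlen=...) drops the oldest outcome once the window is full.
        self._outcomes: deque[bool] = deque(maxlen=window_size)
        self.threshold = threshold

    def record(self, ok: bool) -> None:
        self._outcomes.append(ok)

    def error_rate(self) -> float:
        if not self._outcomes:
            return 0.0
        return self._outcomes.count(False) / len(self._outcomes)

    def should_alert(self) -> bool:
        return self.error_rate() > self.threshold
```

Structured lines are easier to search and aggregate than free-form messages, and a windowed rate reacts to recent behavior without being skewed by old history.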

Authority and Further Reading

For deeper guidance on debugging practices and reliability, consult credible sources:

- https://www.nist.gov/publications

- https://www.cisa.gov/

- https://cacm.acm.org/

These references provide foundational principles for rigorous engineering, reproducible testing, and dependable software maintenance.

Tools & Materials

- Bug reporting and project management tool (Ensure it captures reproduction steps, environment, logs, and screenshots)

- Reproduction environment (VM/container) (A clean, controlled setup to reproduce reliably)

- Error logs and diagnostic tools (Collect stack traces, metrics, traces, and console outputs)

- Version control access (Track fixes with clear branches and commits)

- Test data set and test harness (Include representative inputs, edge cases, and regression tests)

- Screen capture or recording tools (Helpful for documenting reproducible steps)

- Backup/rollback plan (Have a safe point to revert if a fix introduces issues)

Steps

Estimated time: 45-90 minutes

1. Reproduce the Bug Precisely

Create a minimal, repeatable sequence of actions that triggers the glitch. Include OS/app versions, hardware details, and any relevant inputs. Document observed results and capture screenshots.

Tip: Use a clean environment and a fixed test account to avoid extraneous factors.

2. Collect Error Data and Logs

Gather stack traces, log files, performance metrics, and network traces. Correlate timestamps with the reproduction steps to find causality.

Tip: Centralize data in a single view to compare across attempts quickly.

3. Form a Hypothesis Based on Evidence

Based on collected data, propose plausible root causes. Prioritize hypotheses by impact and likelihood, and plan targeted tests to validate each one.

Tip: Limit hypotheses to testable, isolated changes to reduce noise.

4. Test the Hypothesis with Targeted Experiments

Design small experiments to confirm or refute each hypothesis. Change one variable at a time and observe outcomes.

Tip: Document results and any unexpected side effects for future reference.

5. Implement a Targeted Fix

Apply the smallest, most verifiable change that addresses the root cause. Add or adjust regression tests to prevent relapse.

Tip: Review changes with peers to catch edge cases before merge.

6. Verify Thoroughly and Run Regression Tests

Run the full test suite plus focused tests around the fix. Validate across supported environments and user scenarios.

Tip: Use feature flags or staged rollout to monitor impact.

7. Document the Fix and Update KB

Record the root cause, the fix, test results, and any user-facing notes. Update knowledge base articles for future reference.

Tip: Make the documentation actionable with exact steps to reproduce and verify.

8. Monitor Post-Release

Track key metrics after deployment to confirm the issue remains resolved and to catch any new related glitches early.

Tip: Set alert thresholds sensitive enough to catch regressions without creating overwhelming noise.
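
The advice in the log-collection step to correlate timestamps with reproduction steps can be sketched as follows. The `group_logs_by_step` function is a hypothetical helper, and it assumes you recorded when each reproduction step started.

```python
from bisect import bisect_right
from datetime import datetime


def group_logs_by_step(
    steps: list[tuple[datetime, str]],
    logs: list[tuple[datetime, str]],
) -> dict[str, list[str]]:
    """Assign each log message to the latest step that started at or before it.

    `steps` holds (start time, step label) pairs and `logs` holds
    (timestamp, message) pairs. Messages logged before the first step
    are skipped.
    """
    ordered = sorted(steps)
    starts = [start for start, _ in ordered]
    grouped: dict[str, list[str]] = {label: [] for _, label in ordered}
    for timestamp, message in sorted(logs):
        index = bisect_right(starts, timestamp) - 1
        if index >= 0:
            grouped[ordered[index][1]].append(message)
    return grouped
```

Grouping messages this way makes it easier to see which user action preceded the first error. Keep in mind that ordering in time suggests a cause but does not prove one.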

Your Questions Answered

What should I do first when a glitch is reported?

Begin by reproducing the glitch in a controlled environment and collecting contextual data. This establishes a solid foundation for diagnosing root causes.

First, reproduce the glitch in a controlled setup and gather context so you can diagnose accurately.

How can I tell if the issue is frontend or backend?

Isolate by disabling client-side logic to see if the problem persists. If it does, focus on server APIs or data storage. Cross-check with logs from both sides to confirm the root layer.

Disable client logic to test frontend separately, then inspect server logs to identify backend causes.

Is it ever better to rewrite code to fix a glitch?

Rewrites should be a last resort. Prefer small, testable fixes that address the root cause and add regression tests to prevent recurrence.

Only rewrite if a small fix isn’t feasible; otherwise, target minimal, testable changes.

What if the glitch occurs only on a specific device or config?

Focus on environmental factors first: drivers, OS version, and hardware differences. Create device-specific test cases and verify across similar setups.

Check for environment-specific factors first, then test on similar devices to confirm.

Can software glitches be prevented entirely?

No single fix prevents all glitches, but a combination of good testing, monitoring, and disciplined release practices significantly reduces their occurrence and impact.

Glitches can be minimized, though not eliminated, through solid testing and monitoring.

How long should regression tests take after a fix?

Regression testing time depends on scope, but aim to complete the essential paths within a few hours and automate what you can for future fixes.

Plan for a few hours of regression testing, prioritizing automation where possible.

Top Takeaways

- Reproduce accurately with complete context

- Isolate by layer to minimize scope

- Apply targeted, testable fixes

- Validate thoroughly and monitor after release