What to Do in Software Testing: A Practical Guide

Learn practical, step-by-step methods for software testing, from planning and design to execution, automation, and reporting. This educational guide helps aspiring testers build solid fundamentals and deliver reliable software.

What you will do: plan a testing strategy, design test cases, execute them at the unit, integration, and system levels, report defects, and refine your approach as you go. The guide walks through a step-by-step workflow, key techniques, and common pitfalls.

Foundations of Software Testing

According to SoftLinked, effective testing begins with clear objectives and a risk-based mindset. In practice, this means defining what success looks like before you write a single test. At its heart, software testing is about reducing uncertainty: validating that the software behaves as intended, under realistic conditions, and within acceptable limits of time, cost, and risk.

- The purpose of testing is not to prove there are no bugs, but to reveal where they exist and why they matter.

- Good testers collaborate with developers, product managers, and users to align quality goals with user needs.

In this section you will learn how testing fits into the broader software development lifecycle, why planning matters, and how to balance depth and breadth across tests. You will also see how risk assessment guides where to invest effort and which tests are most likely to find meaningful defects.

Key ideas:

- Define measurable quality objectives (e.g., reliability, performance, security)

- Prioritize tests around high-risk areas and critical workflows

- Use traceability to connect tests to requirements and user stories

The goal is to translate high-level quality aspirations into concrete testing activities, data requirements, and environmental needs that you can reuse across projects, teams, and cadences.
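As a rough illustration of risk-based prioritization, the sketch below scores each area as likelihood times impact and sorts the highest risk first. The 1 to 5 scales, the `FeatureArea` type, and the sample areas are assumptions made for this example, not a scheme prescribed by this guide.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FeatureArea:
    """One part of the product that could be tested (hypothetical model)."""
    name: str
    likelihood: int  # assumed scale: 1 (rarely breaks) to 5 (breaks often)
    impact: int      # assumed scale: 1 (cosmetic) to 5 (critical to users)

    @property
    def risk_score(self) -> int:
        # A common simple heuristic: risk = likelihood x impact.
        return self.likelihood * self.impact


def prioritize(areas):
    """Return areas ordered from highest to lowest risk score."""
    return sorted(areas, key=lambda area: area.risk_score, reverse=True)


# Illustrative data only.
areas = [
    FeatureArea("checkout payment", likelihood=3, impact=5),
    FeatureArea("profile avatar upload", likelihood=2, impact=1),
    FeatureArea("login", likelihood=2, impact=5),
]

for area in prioritize(areas):
    print(f"{area.risk_score:>2}  {area.name}")
```

The ordering is only as good as the scores, so teams typically revisit them whenever requirements or defect history change.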

The Testing Lifecycle

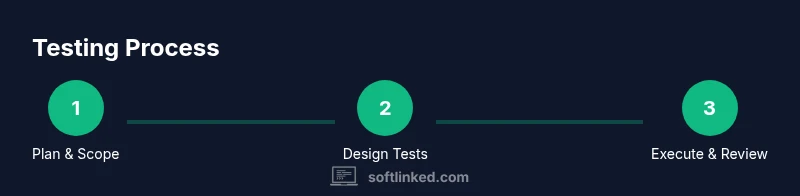

A structured testing lifecycle helps teams deliver consistent quality. Most teams follow a loop of plan, design, execute, report, and learn. Each phase should be distinct but tightly integrated with the code, the product roadmap, and the release schedule.

- Plan: establish objectives, scope, acceptance criteria, and risk-based testing priorities.

- Design: craft test cases, data sets, and environment requirements that cover both typical usage and edge cases.

- Execute: run tests, capture results, log defects, and monitor progress against milestones.

- Report: communicate status to stakeholders with clear, concise defect summaries and risk assessments.

- Learn: reflect on what worked, what didn’t, and how to improve the next cycle.

A successful lifecycle uses lightweight artifacts and automation where helpful, without slowing down delivery. The SoftLinked team emphasizes frequent feedback loops and early validation to minimize late-stage surprises.

Testing Levels and Types

Effective testing covers multiple levels to validate different system aspects.

- Unit testing checks individual components in isolation, typically automated and fast.

- Integration testing validates how components work together, catching interface problems early.

- System testing exercises the entire application in an end-to-end scenario.

- Acceptance testing verifies readiness from a user and business perspective.

Beyond levels, testers employ various types to stress different qualities:

- Regression testing confirms that previously working behavior still works after changes.

- Performance testing measures responsiveness under load.

- Security testing checks for vulnerabilities and insecure configurations.

- Exploratory testing relies on human intuition to discover defects not captured by scripts.

An optimal strategy blends automation with human insight, balancing repeatability with creativity and domain understanding.
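To make the difference between the unit and integration levels concrete, here is a minimal sketch. The `PriceCalculator`, `FixedTaxStub`, and `InMemoryTaxTable` names are hypothetical and exist only for this example. The first test isolates the calculator with a stub; the second exercises it together with a real (if simple) collaborator.

```python
class PriceCalculator:
    """Unit under test: applies a tax rate supplied by a collaborator."""

    def __init__(self, tax_source):
        self.tax_source = tax_source

    def total(self, net: float, region: str) -> float:
        rate = self.tax_source.rate_for(region)
        return round(net * (1 + rate), 2)


class FixedTaxStub:
    """Test double: always returns the same rate, isolating the calculator."""

    def rate_for(self, region: str) -> float:
        return 0.10


class InMemoryTaxTable:
    """A real collaborator with its own lookup logic."""

    def __init__(self, rates):
        self._rates = dict(rates)

    def rate_for(self, region: str) -> float:
        return self._rates[region]


def test_unit_total_with_stub():
    # Unit level: only PriceCalculator's arithmetic is being checked.
    calc = PriceCalculator(FixedTaxStub())
    assert calc.total(100.0, "anywhere") == 110.0


def test_integration_total_with_tax_table():
    # Integration level: calculator and tax table must agree on the interface.
    calc = PriceCalculator(InMemoryTaxTable({"DE": 0.19}))
    assert calc.total(50.0, "DE") == 59.5
```

Functions named `test_*` follow the pytest discovery convention, but they are plain functions and can also be called directly.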

Designing Tests: Techniques and Patterns

Good test design uses repeatable techniques that translate requirements into verifiable conditions.

- Boundary value analysis tests inputs at the edges of valid ranges.

- Equivalence partitioning groups inputs into representative classes to reduce test count.

- Pairwise testing aims for broad coverage with fewer combinations.

- Decision table testing captures complex business rules in compact matrices.

- State transition testing validates behavior across different states and events.

Use acceptance criteria from product owners to decide which scenarios matter most. Document expected results precisely so defects point to the right cause. For maintainability, prefer data-driven tests and reusable test steps rather than one-off scripts.
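Boundary value analysis and equivalence partitioning can be sketched in a few lines. The age rule (18 to 65 inclusive) and the function names are assumptions chosen for illustration, and the tests are data-driven so that adding a case means adding a row rather than a new script.

```python
def boundary_values(low: int, high: int) -> list:
    """Values just outside, on, and just inside an inclusive integer range."""
    return [low - 1, low, low + 1, high - 1, high, high + 1]


def is_valid_age(age: int) -> bool:
    """Hypothetical rule under test: ages 18 to 65 inclusive are accepted."""
    return 18 <= age <= 65


# Equivalence partitioning: one representative value per input class.
PARTITIONS = {
    "below range": (10, False),
    "in range": (40, True),
    "above range": (80, False),
}


def test_boundaries():
    # Inputs are 17, 18, 19, 64, 65, 66: only the two outer values are invalid.
    expected = [False, True, True, True, True, False]
    actual = [is_valid_age(value) for value in boundary_values(18, 65)]
    assert actual == expected


def test_partitions():
    for label, (value, expected) in PARTITIONS.items():
        assert is_valid_age(value) is expected, label
```

Three partition representatives plus six boundary values cover the rule with nine checks instead of testing every possible age.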

Building a Practical Testing Plan

A concrete plan keeps testing focused and measurable.

- Scope and risks: identify what to test, what to skip, and where defects would be most costly.

- Test objectives: state what quality attributes you aim to validate.

- Test design: select techniques, data sets, and environments.

- Environment and data: prepare test rigs, mocks, and test data with consent and privacy in mind.

- Schedule and milestones: align with the development cadence.

- Metrics and reporting: decide which metrics to track and how to present them.

Executing this plan requires clear ownership, transparent communication, and a reliable pipeline for builds, test execution, and defect triage. Automate where it saves time, but preserve manual testing for exploratory work and UX validation.

Automation vs. Manual Testing

Automation excels at repeatability, speed, and coverage, especially for regression checks. Manual testing shines where human judgment, intuition, and usability matter.

- Start with a small automation strategy focused on high-value scenarios.

- Choose a framework that fits your stack and team skills.

- Invest in reliable test data management and deterministic environments.

- Combine scripted tests with exploratory sprints to discover edge cases.

A pragmatic approach blends both modes, using automation to free testers for more valuable analysis and creative investigation. Regularly review automated tests for flakiness and maintainability.
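One simple way to review automated tests for flakiness is to run each test several times and flag any that do not give the same outcome every run. The sketch below assumes tests are plain callables that raise `AssertionError` on failure. The `classify` helper and the sample tests are hypothetical, and the "flaky" test is made deterministic here (it fails on every second call) so the example is reproducible.

```python
from collections import Counter
from typing import Callable, Dict


def run_once(test: Callable[[], None]) -> bool:
    """Run a test callable; True means it passed."""
    try:
        test()
        return True
    except AssertionError:
        return False


def classify(tests: Dict[str, Callable[[], None]], runs: int = 5) -> Dict[str, str]:
    """Label each test as stable-pass, stable-fail, or flaky."""
    report = {}
    for name, test in tests.items():
        outcomes = Counter(run_once(test) for _ in range(runs))
        if outcomes[True] == runs:
            report[name] = "stable-pass"
        elif outcomes[False] == runs:
            report[name] = "stable-fail"
        else:
            report[name] = "flaky"
    return report


def always_passes():
    assert 1 + 1 == 2


def always_fails():
    assert 1 + 1 == 3


_calls = {"n": 0}


def depends_on_shared_state():
    # Simulates a test that leaks state between runs: fails on even calls.
    _calls["n"] += 1
    assert _calls["n"] % 2 == 1


print(classify({
    "always_passes": always_passes,
    "always_fails": always_fails,
    "depends_on_shared_state": depends_on_shared_state,
}))
```

A test labeled flaky is a candidate for quarantine and root-cause analysis (shared state, timing, external services) rather than silent retries.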

Measuring Testing Success: Metrics and Reporting

Quantitative metrics help teams assess testing progress and quality. Common measures include:

- Defect density: defects per thousand lines of code.

- Test coverage: percentage of requirements or code exercised by tests.

- Pass rate and failure rate: proportion of tests that pass or fail.

- Mean time to detect (MTTD) and mean time to repair (MTTR): how quickly defects are found and how quickly they are fixed.

- Test execution time and automation throughput: how quickly tests run.

Note that metrics are only useful when interpreted in context. Pair metrics with qualitative insights from stakeholders and user feedback. The SoftLinked team's guidance is to align reporting with decision-making needs rather than chasing vanity numbers.
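Several of the measures above reduce to simple arithmetic. The following sketch shows one way to compute defect density, pass rate, and MTTR. The function names and sample figures are illustrative assumptions, not data from a real project.

```python
from datetime import datetime, timedelta


def defect_density(defects: int, lines_of_code: int) -> float:
    """Defects per thousand lines of code (KLOC)."""
    return defects / (lines_of_code / 1000)


def pass_rate(passed: int, executed: int) -> float:
    """Share of executed tests that passed; 0.0 if nothing was executed."""
    return passed / executed if executed else 0.0


def mean_time_to_repair(defects) -> timedelta:
    """Average of (fixed - opened) over a non-empty list of timestamp pairs."""
    durations = [fixed - opened for opened, fixed in defects]
    return sum(durations, timedelta()) / len(durations)


# Illustrative numbers only.
repairs = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 13, 0)),   # 4 hours
    (datetime(2024, 1, 2, 10, 0), datetime(2024, 1, 2, 18, 0)),  # 8 hours
]

print(defect_density(defects=12, lines_of_code=48_000))  # 0.25 defects per KLOC
print(pass_rate(passed=188, executed=200))               # 0.94
print(mean_time_to_repair(repairs))                      # 6:00:00
```

MTTD can be computed the same way by pairing the time a defect was introduced with the time it was detected, if your tracker records both.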

Real-World Workflow: A Sample Scenario

Imagine you join a mid-size project building a web-based dashboard. Start by defining acceptance criteria with product, map those to test objectives, and design a mix of unit, integration, and end-to-end tests. As development progresses, continuously run automated suites, log defects with clear steps and expected results, and perform exploratory testing around new features.

In this scenario, a minor finding about an API timeout leads to a targeted regression test and a quick fix. The lesson: maintain a living test plan, keep data sets realistic, and ensure your environment mirrors production as closely as possible. According to SoftLinked analysis, teams that iterate their tests alongside development reduce late-stage defects by a meaningful margin.
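A targeted regression test for the timeout in this scenario might look like the sketch below. Everything here is hypothetical: the `Dashboard` class, the `FakeSlowApi` test double, and the choice of fix (falling back to the last good data and marking it stale) are assumptions made to show the shape of such a test, since the scenario does not specify how the defect was fixed.

```python
class FakeSlowApi:
    """Test double that simulates the upstream API timing out."""

    def fetch_metrics(self, timeout: float):
        raise TimeoutError(f"no response within {timeout}s")


class FakeHealthyApi:
    """Test double that answers immediately with fixed data."""

    def fetch_metrics(self, timeout: float):
        return {"active_users": 42}


class Dashboard:
    """Hypothetical component: loads metrics and tolerates API timeouts."""

    def __init__(self, api, timeout: float = 2.0):
        self.api = api
        self.timeout = timeout
        self.last_good = None

    def load(self):
        try:
            data = self.api.fetch_metrics(timeout=self.timeout)
        except TimeoutError:
            # Assumed fix: serve the last good data instead of crashing.
            return {"status": "stale", "data": self.last_good}
        self.last_good = data
        return {"status": "ok", "data": data}


def test_timeout_returns_stale_instead_of_raising():
    dashboard = Dashboard(FakeSlowApi())
    assert dashboard.load() == {"status": "stale", "data": None}


def test_timeout_after_success_serves_last_good_data():
    dashboard = Dashboard(FakeHealthyApi())
    assert dashboard.load()["status"] == "ok"
    dashboard.api = FakeSlowApi()  # upstream starts timing out
    assert dashboard.load() == {"status": "stale", "data": {"active_users": 42}}
```

Keeping this test in the automated suite means the timeout defect cannot return unnoticed in a later release.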

Tools & Materials

- Testing environment and data (virtual machines or containers, realistic datasets with privacy safeguards)

- Test management tool (Jira, qTest, or similar for traceability)

- Defect tracking system (clear fields for steps to reproduce, expected vs. actual, severity)

- Automation framework (Selenium or Playwright for web tests, Appium for mobile)

- CI/CD integration (triggers test suites on each build; supports parallel runs)

- Sample data generator (seed data for repeatable tests)

- Monitoring and logging tools (APM, logs, and dashboards to verify performance under test)
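For the sample data generator, a seeded random source is one straightforward way to get repeatable, synthetic records that contain no real user data. The sketch below is a minimal example. The `make_users` function, its fields, and the default seed are assumptions for illustration.

```python
import random


def make_users(count: int, seed: int = 42):
    """Generate synthetic user records; the same seed gives the same data."""
    rng = random.Random(seed)  # local generator, leaves global random state alone
    plans = ["free", "pro", "enterprise"]
    return [
        {
            "id": i,
            # example.test is a reserved-style placeholder domain, not a real one.
            "email": f"user{i}@example.test",
            "plan": rng.choice(plans),
            "age": rng.randint(18, 90),
        }
        for i in range(1, count + 1)
    ]


first_run = make_users(3)
second_run = make_users(3)
print(first_run == second_run)  # True: identical seed, identical data
```

Because the data is fully synthetic, it can be committed alongside the tests or regenerated on each build without privacy concerns.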

Steps

Estimated time: 4-6 weeks

1. Define objectives and scope

Clarify what quality attributes matter (e.g., reliability, usability) and which areas require testing. Document acceptance criteria and risk-based priorities to guide every subsequent step.

Tip: Tie tests to user stories or requirements to avoid scope creep.

2. Choose testing levels and techniques

Select unit, integration, system, and acceptance tests; decide on techniques like boundary value analysis, equivalence partitioning, and exploratory testing.

Tip: Balance automated tests for repeatability with manual exploration for discovery.

3. Design test cases and data

Create repeatable, data-driven test cases with clear inputs and expected outcomes. Include negative scenarios and edge cases.

Tip: Reuse test steps and data across similar features to reduce maintenance.

4. Prepare environments and data

Set up production-like environments, ensure data privacy, and configure monitoring to observe tests in action.

Tip: Isolate test data from production data to prevent leaks.

5. Implement and execute tests

Develop automated tests for high-value flows; run manual tests for usability and edge conditions; log defects with reproducible steps.

Tip: Run smoke tests first to validate the build before deeper testing.

6. Analyze results and report

Aggregate results, prioritize defects, and communicate clear risk assessments to stakeholders.

Tip: Provide both quantitative metrics and qualitative context to aid decisions.

7. Iterate and improve

Update tests based on feedback, deprecate flaky tests, and adjust the plan for the next cycle.

Tip: Treat testing as a living practice that evolves with the product.

Your Questions Answered

What is the main goal of software testing?

The main goal is to discover defects and verify that the software meets its requirements and user needs. Testing helps reduce risk by providing evidence about quality and readiness for release.

Which testing level should I start with as a beginner?

Begin with unit testing to validate individual components, then progressively add integration and system tests. Acceptance testing should follow once the product meets basic quality thresholds.

How do I decide between manual and automated testing?

Automate repetitive, high-risk, and data-heavy scenarios to save time. Reserve manual testing for exploration, UX feedback, and complex paths where human judgment adds value.

What metrics should I track for testing?

Track defect density, test coverage, pass/fail rates, and MTTR/MTTD. Contextualize metrics with product goals and stakeholder feedback.

How can I maintain test data responsibly?

Use synthetic or anonymized data, document data lineage, and ensure privacy controls. Regularly refresh data to reflect real usage patterns.

What should a good test plan include?

Objectives, scope, risk-based priorities, test design strategies, environment setup, data plans, schedule, and defined metrics.

Top Takeaways

- Define clear quality objectives before testing.

- Balance automation with human insight for coverage.

- Design reusable, data-driven tests.

- Iterate tests alongside development for faster feedback.