Software and Technology: A Comprehensive Comparison for Developers

A rigorous, objective comparison of software and technology, examining fundamentals, modern stacks, architecture, and practical decision criteria to help developers choose tools and approaches.

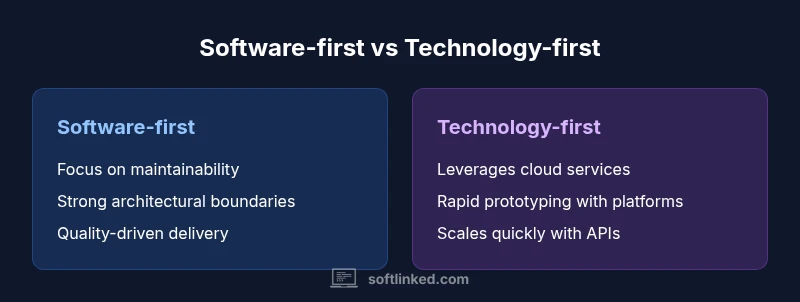

In software and technology, a key decision is often whether to pursue a software-centric strategy or a technology-driven platform approach. A balanced path combines solid software fundamentals with adaptable tech stacks, enabling maintainability, scalability, and faster iteration. For aspiring engineers, understanding how software quality intersects with evolving technology makes it easier to select tools, architectures, and practices that scale with real-world demands.

What software and technology really mean in practice

The phrase software and technology encompasses both the code that runs systems and the broader set of tools, platforms, and practices that enable those systems to operate reliably at scale. When you study this domain, you should track two intertwined threads: software fundamentals (data structures, algorithms, testing, maintainability) and technology-enablement (cloud platforms, AI capabilities, microservices, APIs). The keyword here is balance: strong fundamentals prevent brittle systems, while modern technology stacks accelerate delivery and enable new capabilities. For developers, the goal is to build capabilities that endure as technologies evolve, not just to chase the latest toolkit. In SoftLinked’s view, durable software and technology outcomes hinge on disciplined design and deliberate adoption of new tech.

Key takeaway: Treat software and technology as two sides of the same coin, each reinforcing the other to deliver robust, scalable solutions.

The core building blocks: software fundamentals and where tech fits

At the heart of any durable software system are fundamentals that never go out of style: clear interfaces, modular design, and well-tested components. Data structures and algorithms determine performance, while architecture patterns such as layered, hexagonal, or clean architecture guide maintainability. Version control, continuous integration, and automated testing reduce regression risk and support rapid iteration. On the technology side, modern stacks bring capabilities like cloud-native deployment, containerization, and service meshes that enable reliability at scale. The challenge is to integrate these tools without creating unnecessary complexity. A strong foundation in software engineering makes it easier to swap or upgrade tech components when needed, without rewriting large portions of the system. The most successful teams align tech choices with architectural principles rather than marketing buzzwords.
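To make the idea of clear interfaces and swappable components concrete, here is a minimal Python sketch. The names `KeyValueStore`, `InMemoryStore`, `NamespacedStore`, `save_profile`, and `load_profile` are hypothetical and chosen only for illustration. The point is that application code depends on the interface alone, so a backend can be replaced without touching the callers.

```python
from typing import Dict, Optional, Protocol


class KeyValueStore(Protocol):
    """Hypothetical interface: the only surface callers may depend on."""

    def put(self, key: str, value: str) -> None: ...

    def get(self, key: str) -> Optional[str]: ...


class InMemoryStore:
    """Simple implementation, handy for tests and local development."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)


class NamespacedStore:
    """Stand-in for a different backend: prefixes every key with a namespace."""

    def __init__(self, namespace: str) -> None:
        self._namespace = namespace
        self._data: Dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        self._data[f"{self._namespace}:{key}"] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(f"{self._namespace}:{key}")


def save_profile(store: KeyValueStore, user_id: str, name: str) -> None:
    """Application code: knows the interface, not the implementation."""
    store.put(f"profile/{user_id}", name)


def load_profile(store: KeyValueStore, user_id: str) -> Optional[str]:
    return store.get(f"profile/{user_id}")
```

Passing either `InMemoryStore()` or `NamespacedStore("tenant-a")` to `save_profile` and `load_profile` gives the same behavior from the caller's point of view, which is what makes a later technology swap cheap.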

Modern technology layers and ecosystems: where best practices live

Technology today layers capabilities from infrastructure to applications. Cloud services, APIs, containers, and orchestration tools abstract away many manual tasks, allowing teams to focus on business value. AI-assisted tooling and data-driven platforms reshape how we build, test, and optimize software. However, new tech brings new risks: vendor lock-in, security considerations, and the need for governance. Teams that succeed map these layers to concrete non-functional requirements—performance, reliability, security, and compliance—ensuring that technology accelerates outcomes rather than adding drag. In this landscape, software and technology are inseparable: the right architecture leverages cloud-native services without sacrificing testability or portability.

Architecture and design patterns for durable software

Durable software relies on architectural decisions that promote decoupling, testability, and evolvability. Common patterns include domain-driven design for complex business rules, event-driven architectures for scalability, and microservices with bounded contexts to reduce coupling. Design considerations should account for cross-cutting concerns like logging, tracing, monitoring, and security. The technology layer should support these patterns rather than dictate them. By prioritizing stable interfaces, clear responsibilities, and well-defined APIs, teams can swap implementations or adopt new tech with minimal disruption. The synergy between strong architecture and thoughtful technology adoption is what preserves long-term maintainability as requirements change.
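As an illustration of how an event-driven design reduces coupling, the following sketch shows a tiny in-process publish/subscribe bus. `EventBus`, the event name `order_placed`, and the payload shape are assumptions made for this example. A production system would more likely use a message broker, but the decoupling principle is the same: publishers do not know who consumes their events.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, Dict, List

# A handler receives the event payload; its return value is ignored.
Handler = Callable[[Dict[str, object]], None]


class EventBus:
    """Hypothetical in-process event bus (synchronous, single-threaded)."""

    def __init__(self) -> None:
        self._handlers: DefaultDict[str, List[Handler]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Handler) -> None:
        """Register a handler for one event type."""
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: Dict[str, object]) -> int:
        """Deliver the payload to every subscriber, in subscription order.

        Returns the number of handlers that were called.
        """
        handlers = list(self._handlers.get(event_type, []))
        for handler in handlers:
            handler(payload)
        return len(handlers)
```

A billing component and a notification component could each call `subscribe("order_placed", ...)` independently. The ordering code only calls `publish`, so adding or removing a consumer requires no change to the publisher.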

Quality practices: testing, CI/CD, and code reviews as guardrails

Quality is a product of process as much as code. Automated unit, integration, and performance tests catch regressions early, while continuous integration ensures that new changes integrate smoothly. Continuous delivery/continuous deployment (CD) pipelines automate build, test, and release steps, reducing manual error and accelerating feedback loops. Code reviews remain a powerful multiplier for learning, catching anti-patterns, and sharing domain knowledge. When software and technology intersect, you gain confidence that new tooling or platform services won’t compromise reliability. A robust quality discipline enables teams to adopt innovative tech with less risk and faster recovery if something goes wrong.
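A small example of an automated unit test acting as a guardrail is sketched below with Python's standard `unittest` module. The function `slugify` is a hypothetical unit under test, not something from a particular codebase. The tests pin down its current behavior so that a later change which breaks it fails in CI rather than in production.

```python
import unittest


def slugify(title: str) -> str:
    """Hypothetical function under test: lower-case, hyphen-separated slug."""
    # Replace every non-alphanumeric character with a space, then split on whitespace.
    words = "".join(ch if ch.isalnum() else " " for ch in title).split()
    return "-".join(word.lower() for word in words)


class SlugifyTests(unittest.TestCase):
    def test_basic_title(self) -> None:
        self.assertEqual(slugify("Hello World"), "hello-world")

    def test_punctuation_and_extra_spaces(self) -> None:
        self.assertEqual(slugify("  CI/CD: a primer! "), "ci-cd-a-primer")

    def test_empty_input(self) -> None:
        self.assertEqual(slugify(""), "")
```

Saved in a test module, these cases can be run with `python -m unittest`, and a CI pipeline can run the same command on every change.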

Security, privacy, and compliance as core requirements

Security must be embedded from the start, not bolted on later. Threat modeling, risk assessments, and secure-by-default configurations should accompany every architectural decision. Privacy considerations—data minimization, access controls, encryption, and auditability—shape how data flows through software and technology stacks. Compliance requirements vary by domain but share a common goal: protecting users and preserving trust. Integrating security and privacy into the design reduces the cost of remediation after incidents and helps sustain a credible product in the eyes of customers and regulators alike.
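One way to picture data minimization with a secure-by-default posture is an allow-list filter applied before a record leaves a service. The sketch below is an assumption-laden illustration: `ALLOWED_FIELDS`, the field names, and `minimize` are hypothetical. Because only explicitly allowed fields pass through, any new field added upstream is dropped until someone deliberately allows it.

```python
from typing import Any, Dict, FrozenSet, Mapping

# Hypothetical allow-list: the only fields a downstream analytics system may receive.
ALLOWED_FIELDS: FrozenSet[str] = frozenset({"user_id", "plan", "country"})


def minimize(
    record: Mapping[str, Any],
    allowed: FrozenSet[str] = ALLOWED_FIELDS,
) -> Dict[str, Any]:
    """Return a copy of the record containing only allow-listed fields."""
    return {key: value for key, value in record.items() if key in allowed}
```

An allow-list is used here instead of a deny-list so that the default for an unknown field is exclusion.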

Data, analytics, and decision-making in software projects

Data-driven decisions improve both software quality and technology choices. Telemetry, logs, and metrics reveal how systems behave under real load, which informs architectural adjustments and capacity planning. Product analytics highlight user value, guiding feature prioritization and technical debt reduction. However, data must be collected and interpreted responsibly to avoid misinformed bets. By aligning data collection with clear hypotheses and outcomes, teams can calibrate their software and technology investments to maximize return on effort.
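To show how raw telemetry can inform capacity planning, the sketch below computes latency percentiles from a list of request durations using the nearest-rank method. The function name `percentile` and the idea of feeding it millisecond samples are assumptions for illustration. Monitoring systems typically compute percentiles for you, sometimes with a different interpolation method.

```python
import math
from typing import Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    pct percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    # Nearest-rank: 1-based rank = ceil(pct/100 * n).
    rank = math.ceil(pct * len(ordered) / 100)
    return ordered[rank - 1]


def mean(samples: Sequence[float]) -> float:
    """Arithmetic mean, shown for comparison with the percentiles."""
    if not samples:
        raise ValueError("samples must not be empty")
    return sum(samples) / len(samples)
```

Comparing `mean(latencies)` with `percentile(latencies, 95)` is a quick way to see whether a small number of slow requests is hidden behind a healthy-looking average.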

The role of cloud, APIs, and platform services in modern practice

Cloud platforms enable scalable infrastructure, flexible resource allocation, and global reach. APIs and service-oriented designs promote reusability and integration with external partners, while platform services like databases, messaging, and analytics simplify common needs. A prudent approach evaluates total cost of ownership, performance characteristics, and vendor support. The risk of vendor lock-in exists, so teams should design with portability in mind and maintain critical competencies in-house to respond to shifts in market or policy.

Balancing speed, scope, and sustainability in practice

Speed to market matters, but sustainable velocity wins in the long run. Teams should balance short-term delivery with long-term maintainability by prioritizing stable interfaces, careful debt management, and incremental architectural improvements. Decision frameworks—such as lightweight architecture review boards, roadmaps aligned to business outcomes, and risk-adjusted prioritization—help maintain a healthy balance between exploration of new tech and discipline in software delivery. The ultimate objective is to deliver value consistently while preserving the capacity to adapt as software and technology evolve.

Practical decision framework for teams: a step-by-step approach

To translate theory into action, start with a clear problem statement and success metrics. Map functional requirements to non-functional requirements (performance, security, resilience). Choose an architectural style that supports evolving needs, then select tech stacks and platform services that align with those goals. Establish governance that favors experimentation within safe boundaries and enforces quality through automated tests and code reviews. Regularly reassess choices as new information becomes available, ensuring that both software and technology decisions remain aligned with business value.
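The step of mapping requirements to technology choices can be sketched as a weighted decision matrix. Everything in this example is an assumption for illustration: the criteria names, the weights, the rating scale, and the function names `weighted_score` and `rank_options`. It is one simple way to make trade-offs explicit, not a prescribed method.

```python
from typing import Dict, List, Mapping, Tuple


def weighted_score(weights: Mapping[str, float], ratings: Mapping[str, float]) -> float:
    """Weighted average of ratings, using one weight per criterion."""
    missing = set(weights) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(weights.values())
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(weights[c] * ratings[c] for c in weights) / total_weight


def rank_options(
    weights: Mapping[str, float],
    options: Mapping[str, Mapping[str, float]],
) -> List[Tuple[str, float]]:
    """Return (option name, score) pairs, best score first."""
    scored = [(name, weighted_score(weights, ratings)) for name, ratings in options.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Hypothetical inputs: weights reflect how much each non-functional
# requirement matters; ratings use a 1 (poor) to 5 (excellent) scale.
example_weights: Dict[str, float] = {"performance": 3, "security": 4, "resilience": 3}
example_options: Dict[str, Dict[str, float]] = {
    "option_a": {"performance": 5, "security": 2, "resilience": 3},
    "option_b": {"performance": 3, "security": 4, "resilience": 4},
}
```

Calling `rank_options(example_weights, example_options)` orders the candidates by score. The numbers matter less than the discussion they force about which requirements carry the most weight, and the scores should be revisited as new information arrives.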

Real-world scenarios: small teams vs. large enterprises

Small teams often benefit from lean architectures and platform services that speed iteration, while larger organizations prioritize governance, security, and enterprise-grade integrations. In smaller settings, opting for modular components and reusable services can deliver quick wins without sacrificing future flexibility. Larger teams should emphasize standardized patterns, comprehensive documentation, and a shared mental model for tech decisions to reduce fragmentation. Regardless of size, the goal remains consistent: align software quality with pragmatic technology choices to deliver reliable, scalable outcomes.

Getting started today: a 90-day action plan

Begin with a baseline assessment of current software quality and technology readiness. Create a backlog that separates architectural work from feature delivery, and set up a lightweight CI/CD pipeline if one is missing. Invest time in improving test coverage, defining clean interfaces, and creating a simple governance process for evaluating new tech. Schedule regular reviews to measure progress against defined metrics, and reserve time for refactoring to pay down accumulated technical debt. By adhering to a disciplined 90-day plan, teams can realize meaningful improvements in both software and technology stacks.

Comparison

| Feature | Software-centric approach | Technology-driven platform |
|---|---|---|
| Primary focus | Quality of code, architecture, and maintainability | System maturity, integration, and extensibility |
| Time-to-market | Longer upfront design but smoother later changes | Faster iterations through reusable tech and services |
| Scalability | Depends on modular design and clear interfaces | Platform-scale through managed services and APIs |
| Cost of ownership | Higher upfront design costs, potential long-term savings | Lower upfront costs with ongoing platform and vendor fees |
| Risk and compliance | Controlled risk via testing and strong engineering practices | Complex risk due to dependencies, licenses, and governance |

Pros

- Promotes durable, well-tested code and clear architectures

- Encourages modularity and clean API design

- Improves long-term adaptability to new technologies

Weaknesses

- Higher upfront investment and longer planning cycles

- Requires disciplined governance to avoid bottlenecks

A balanced software-first and technology-enabled strategy wins

Prioritize solid software fundamentals while adopting adaptable tech where it unlocks value. This combo reduces risk, improves maintainability, and sustains velocity as technology evolves. The SoftLinked team believes this blended approach delivers durable outcomes.

Your Questions Answered

What is the difference between software and technology in this context?

Software refers to the code, architecture, and practices that produce the behavior of a system. Technology encompasses the tools, platforms, and services that enable that software to run, scale, and evolve. Together, they form a continuum where good software design enables effective use of advanced technology.

Software is the code and architecture; technology is the tools and platforms that make it run and scale.

Why are software fundamentals still important when new tech arrives?

Fundamentals like modularity, testing, and clean interfaces help you adapt to new tech without rewriting systems. They reduce complexity, improve reliability, and make migrations or integrations smoother as technology stacks change.

Solid fundamentals make it easier to adopt new tech without breaking things.

When should a team adopt AI or ML components?

Adopt AI/ML when there is clear value tied to data-driven decisions, a reliable data pipeline, and a measurable ROI. Start with small, well-scoped pilots before broader integration to minimize risk and avoid overengineering.

Adopt AI only when it clearly adds value and you have a reliable data pipeline to support it.

How can small teams balance speed and quality?

Small teams should invest in essential automation (CI/CD, tests) and modular design to ship quickly without sacrificing quality. Prioritize features with high business impact and plan regular refactors to prevent debt from accumulating.

Ship fast with automation, but keep the code clean for future changes.

What metrics best reflect software quality and technology health?

Useful metrics include test coverage, code churn, MTTR (mean time to restore), deployment frequency, and system latency under load. Combine these with business metrics like feature delivery rate and user satisfaction to gauge overall health.
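Two of these metrics, MTTR and deployment frequency, are straightforward to compute once incident and deployment timestamps are recorded. The sketch below assumes incidents are stored as `(started, restored)` pairs and deployments as a list of timestamps. Both the data shapes and the function names are hypothetical.

```python
from datetime import datetime, timedelta
from typing import Sequence, Tuple


def mean_time_to_restore(incidents: Sequence[Tuple[datetime, datetime]]) -> timedelta:
    """Average duration between an incident starting and service being restored."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum((restored - started for started, restored in incidents), timedelta())
    return total / len(incidents)


def deployments_per_week(
    deploy_times: Sequence[datetime],
    window_start: datetime,
    window_end: datetime,
) -> float:
    """Average number of deployments per week within [window_start, window_end)."""
    if window_end <= window_start:
        raise ValueError("window_end must be after window_start")
    in_window = [t for t in deploy_times if window_start <= t < window_end]
    weeks = (window_end - window_start) / timedelta(weeks=1)
    return len(in_window) / weeks
```

Tracking both values over the same time window helps show whether faster delivery is coming at the cost of longer recovery times.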

Track tests, reliability, and deployment speed to gauge health.

How should a company handle tech debt across teams?

Treat tech debt as a prioritized backlog item with clear acceptance criteria. Reserve time in sprints for debt reduction, and track debt ratio over time. Alignment across teams minimizes divergence and avoids repetitive fixes.

Plan debt work like any other feature with clear goals.

Top Takeaways

- Anchor decisions in software fundamentals first

- Leverage technology to accelerate delivery, not replace design

- Prioritize maintainability, testability, and clear interfaces

- Assess risk continuously and plan for governance

- Adopt a data-driven approach to measure impact