When Was Software Invented? A Clear Timeline

Explore the nuanced origins of software, from early computation ideas to modern engineering, and understand why there is no single invention date, according to SoftLinked.

Software as a concept did not have a single invention date. The idea emerged across decades, beginning with early computation concepts in the 19th and 20th centuries, evolving through stored-program computers in the 1940s–1950s, and solidifying with high‑level programming languages in the late 1950s. In short, there is no single inventor or date; the field coalesced through multiple milestones and communities.

The Origins of Software: A Multidecade Story

Many readers ask: when was software invented? The honest answer is that there is no single date. The idea grew out of centuries of thinking about computation, from abstract algorithms to programmable machines. In the 19th century, Ada Lovelace, and in the early 20th century the first computer scientists, laid the groundwork for software as a set of instructions that a machine could execute. As devices grew more complex, the need for a systematic way to describe those instructions led to a shift from hardware-driven programming to software-driven control.

By the mid-20th century, researchers and engineers began to treat software as something separable from the physical hardware. This shift accelerated with the development of stored-program computers, which could hold instructions in memory and modify programs without rewiring the machine. The result was a dramatic improvement in flexibility and capability, enabling more complex software to be written, tested, and reused across projects. This period also marks the emergence of formal methods for describing, verifying, and maintaining software, a precursor to modern software engineering.

For developers today, the logic behind software’s evolution is a reminder that history is not a single spark but a continuous arc of invention, experimentation, and standardization. The SoftLinked team emphasizes that this continuum explains why there is no one inventor to credit with software as a whole.

From Algorithms to Code: The Prehistory

The early prehistory of software resides in the idea that computation could be abstracted into steps that a machine could perform. Long before electronic computers, mathematicians and engineers debated how to represent and execute complex tasks. The turning point was the realization that algorithms, when codified, could be understood and implemented as programmable instructions. This conceptual leap set the stage for later machines to execute those instructions reliably, turning theoretical ideas into practical software artifacts. As hardware matured, these ideas were translated into concrete programming activities, with teams collaborating to design, test, and refine procedural code that could guide machines through increasingly sophisticated tasks.

In parallel, researchers explored the boundary between what a calculation is and how to express it as a sequence of operations. This exploration culminated in the postwar era, when the emphasis shifted from constructing new hardware to writing flexible software that could run on existing hardware. The result was a growing recognition that software deserved its own design principles, workflows, and governance, distinct from the physical components that carried it.

For students and professionals, this phase demonstrates the importance of theory in practical software work: algorithms, data representations, and programming models all contribute to a robust software stack that supports modern applications.

The 1940s–1950s: Stored-Program Computers and First Languages

The 1940s and 1950s are often cited as a pivotal stretch in the story of software because they introduced stored-program architecture: the idea that a computer’s instructions could be stored in memory and modified like data. The Manchester Baby (1948) and similar early machines demonstrated that software could be loaded and changed without rewiring hardware. This capability opened the door to more versatile software, enabling developers to experiment with new instructions and programs quickly. Alongside hardware advances, the first high‑level programming languages began appearing, reducing the reliance on hand-written machine code and enabling programmers to express ideas in more human-oriented terms. These languages laid the groundwork for scalable, reusable software and set the stage for more ambitious projects that followed in the 1950s and beyond.
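The stored-program idea can be illustrated with a minimal sketch. The toy machine below is hypothetical: its four instructions (`LOAD`, `ADD`, `STORE`, `HALT`) and its memory layout are assumptions made for illustration and are not modeled on the Manchester Baby or any real instruction set. What it shows is that instructions and data live in the same memory, so changing the program is just another write to memory.

```python
# Toy stored-program machine (hypothetical instruction set, for illustration only).
# Instructions and data share one memory list.

def run(memory):
    """Execute instructions starting at address 0 until HALT; return memory."""
    acc = 0  # accumulator register
    pc = 0   # program counter
    while True:
        op, arg = memory[pc]
        pc += 1
        if op == "LOAD":
            acc = memory[arg]
        elif op == "ADD":
            acc += memory[arg]
        elif op == "STORE":
            memory[arg] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown instruction: {op!r}")

# Addresses 0-3 hold the program; addresses 4-6 hold data.
memory = [
    ("LOAD", 4),
    ("ADD", 5),
    ("STORE", 6),
    ("HALT", None),
    2, 3, 0,
]
run(memory)
result_add = memory[6]  # sum of the values at addresses 4 and 5

# Changing the program is a memory write, not a rewiring job:
memory[1] = ("ADD", 4)  # the machine now doubles the value at address 4
run(memory)
result_double = memory[6]
```

Because the program is ordinary data in `memory`, the second run behaves differently after a single assignment, which is the flexibility the early stored-program machines made possible.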

From a historical perspective, this era shows how software moved from an afterthought to a core dimension of computing. It also foreshadowed the discipline of software engineering, where teams would adopt structured methodologies to manage increasingly complex software systems. The shift from bespoke, one-off programs to reusable, modular code is a through-line that continues to define software practice today.

The Rise of High-Level Languages: FORTRAN, COBOL, Lisp

The late 1950s witnessed a revolution in how humans write software. FORTRAN (1957) made scientific computing more accessible, COBOL (1959) popularized business-focused applications, and Lisp (1958) demonstrated the power of symbolic programming for artificial intelligence tasks. These languages reduced the cognitive load on developers, enabling teams to focus on problem-solving rather than wrestling with low-level machine details. The emergence of compilers and assemblers as translation tools further accelerated productivity, allowing more people to contribute to software projects.

As languages evolved, so did the practices around writing correct and maintainable software. Language design influenced programming paradigms (procedural, functional, declarative), while tooling improvements in the decades that followed, including debuggers, testing frameworks, and version control, began to shape how teams collaborate. The late 1950s mark a turning point: software ceased to be a handful of ad hoc programs and became a structured body of knowledge with its own conventions and standards.

Software Engineering Emerges: Practices, Methods, Tools

By the late 1960s, the software crisis, in which growing projects outpaced the management and quality-control techniques of the day, prompted a formal response. The term software engineering gained traction through the NATO-sponsored Software Engineering Conferences of 1968 and 1969, leading to structured methodologies, lifecycle models, and early best practices. This era saw the first efforts to standardize software lifecycle processes, with an emphasis on reliability, maintainability, and scalability. It established the expectation that software development required disciplined planning, risk management, and measurable quality, not just clever coding.

As computing expanded into business, education, and government, software engineering matured into a profession with specialized roles, process frameworks, and governance mechanisms. Engineers began to apply systematic approaches—requirements gathering, design reviews, testing, and project management—to produce software that could be trusted in critical contexts. The lessons from this period continue to inform modern software practices, from agile and DevOps to formal verification and beyond.

The Modern Era: Open Source, SaaS, and AI-Driven Software

The late 20th and early 21st centuries brought transformative shifts in how software is developed, distributed, and consumed. The open-source movement democratized collaboration, enabling developers worldwide to contribute, review, and reuse code. The rise of software-as-a-service (SaaS) changed delivery models, prioritizing cloud-hosted, subscription-based software that scales with user needs. More recently, artificial intelligence and machine learning have integrated into software development and product features, injecting new capabilities and complexity. These trends illustrate that software invention is ongoing: new architectures, governance models, and tooling continue to reshape what software can do and how it is built.

For developers, this current landscape underscores the importance of lifelong learning, platform versatility, and an openness to cross-disciplinary collaboration. As the field evolves, practitioners must balance innovation with reliability, ensuring software remains robust as capabilities advance. SoftLinked’s perspective emphasizes that the story of software is still being written, with every new framework, language, or tool contributing to the broader trajectory.

What This Means for Developers Today

Understanding the history of software helps modern developers contextualize today’s practices and tools. Instead of chasing a single moment of invention, focus on the continuities: abstraction, modularity, and automation. Build proficiency across language paradigms, learn how to design for maintainability, and adopt practices that support collaboration and quality assurance. Finally, keep an eye on emerging models—open source ecosystems, cloud-native architectures, and AI-assisted development—that are likely to shape the next phase of software evolution. By anchoring learning in this continuum, aspiring engineers can better navigate a field that has always learned, adapted, and improved over time.

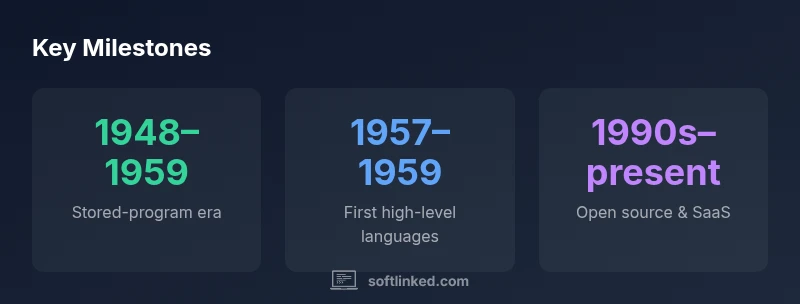

Milestones in the origins and evolution of software

| Era / Milestone | What happened | Approx. Year / Range |
|---|---|---|
| Prehistory & early ideas | Algorithms and computational thinking emerge, laying groundwork for software | 1800s–1940s |
| Stored-program era | Hardware adopts stored-program architecture enabling flexible software | 1948–1959 |
| High-level languages | First languages enabling easier programming | 1957–1959 |
| Open source & modern delivery | Collaborative development and software-as-a-service models reshape distribution | 1990s–present |

Your Questions Answered

What does the term software mean in modern computing?

Software refers to the set of instructions, data, and programs that enable a computer to perform tasks. It sits between the user and the hardware, providing an interface and a set of capabilities.

Software is the set of instructions that tells a computer what to do, serving as the layer between you and the hardware.

When did people start thinking about software as a separate concept?

The idea gradually formed in the mid-20th century as researchers moved from hardware-centric designs to programmable instructions stored in memory. This shift culminated in the stored-program computer era and the emergence of high-level programming languages in the 1950s.

People began thinking of software as separate from hardware in the mid-20th century with stored-program computers and early languages.

Why isn’t there a single invention date for software?

Software evolved through multiple innovations across decades, involving theorists, engineers, and organizations worldwide. Its definition and methods developed gradually, not overnight.

There isn’t one date because software grew through many innovations over many years.

What are the major eras in software history?

Key eras include early algorithmic thought, the stored-program era (late 1940s–1950s), the rise of high-level languages (1950s–1960s), formal software engineering (1960s–1980s), and open source and SaaS (1990s–present).

Major eras include algorithms, stored-program computers, high-level languages, engineering, and open source/software-as-a-service.

How has software evolved with AI and cloud computing?

AI and cloud computing have shifted software toward intelligent, scalable services and platform-centric development, enabling distributed teams and rapid experimentation.

AI and cloud computing push software toward smarter, scalable services and global collaboration.

What can beginners focus on to understand software history?

Study key milestones (algorithms, stored-program computers, high-level languages, software engineering) and explore how each era enabled new capabilities and practices.

Learn the big milestones and how they unlocked new kinds of software.

“Software did not rise from a single inventor; it grew through successive innovations in theory, hardware, and programming practice.”

Top Takeaways

- View software as a multi-decade evolution, not a single invention.

- Trace milestones from algorithms to high-level languages.

- Recognize the role of stored-program architecture in enabling flexible software.

- Understand open source and SaaS as defining forces of modern software.