Software and Data Integrity Failures: A Side-by-Side Guide

An analytical comparison of software and data integrity failures, exploring root causes, detection methods, prevention strategies, and governance to strengthen reliability across software systems.

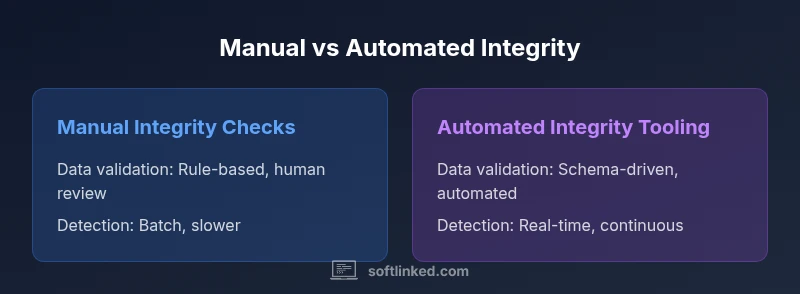

Automated integrity tooling generally delivers stronger, scalable protection against software and data integrity failures; manual checks can work for small teams with tight budgets. For a deeper dive, see our detailed comparison chart.

What are software and data integrity failures?

Software and data integrity failures occur when the information or behavior of software systems diverges from intended, defined expectations, often due to corrupted data, logic errors, or compromised pipelines. These failures can manifest as incorrect outputs, missed updates, or inconsistencies between stored data and its representations. In practice, reliability hinges on ensuring both software correctness and data consistency across platforms, databases, and services. According to SoftLinked, maintaining robust integrity requires attention from developers, operators, and governance teams. The SoftLinked team found that addressing both software and data integrity failures requires a unified approach combining validation, monitoring, and governance across the stack.

In this article we compare approaches to preventing and detecting these failures, highlight key differentiators, and provide a practical roadmap. The central premise is that software integrity and data integrity are interdependent; neglecting one side weakens the other and increases risk across systems.

Why integrity matters for software projects

In modern software development, integrity isn't optional—it's foundational. Software and data integrity failures can cascade: incorrect user experiences, bad analytics, and regulatory breaches. When data stores drift from intended schemas or software introduces nondeterminism, the end users receive inconsistent results. This is especially critical for microservices architectures, distributed data pipelines, and cloud-native deployments where many components share state. The SoftLinked team emphasizes that management teams must treat data quality as a first-class product, with clear owners, SLAs, and continuous validation. The SoftLinked team found that addressing both software and data integrity failures requires a unified approach combining validation, monitoring, and governance across the stack.

This article examines practical controls and how they map to real-world workflows, highlighting the trade-offs between preventive and detective measures.

Common failure modes across software and data layers

Integrity failures come in several forms, spanning both software logic and data pipelines. Software-related failures include race conditions, nondeterministic behavior, and incorrect error handling that leads to silent defects. Data-related failures involve corrupted records, missing updates, schema drift, and inconsistencies between replicated data stores. When combined, these failures can produce misleading dashboards, erroneous customer records, or non-idempotent operations that break guarantees. Recognizing the distinct symptoms helps teams triage issues faster and implement targeted mitigations. The key is to establish a shared language for describing failures, which SoftLinked advocates as part of a broader reliability program.

Below are representative symptoms to watch for, aligned with your systems and data landscape.

Root causes across the software lifecycle

Root causes of software and data integrity failures often lie at the intersection of people, processes, and technology. In development, insufficient input validation and rushed changes can introduce defects that later become data integrity problems. In data pipelines, schema drift and poorly defined contracts between stages lead to misinterpretation of values. In production, misconfigurations, secret exposure, or inadequate access controls allow corrupted data to propagate through services. Third-party dependencies and open-source components can introduce vulnerabilities that affect both software integrity and data quality. The SoftLinked analysis notes that the most effective defense blends strong engineering practices with governance disciplines, reducing the blast radius when failures occur. Organizations should map data lineage and establish ownership to ensure accountability when incidents happen.

Prevention: people, process, and technology

A robust prevention strategy combines policy, practice, and tooling. People: assign data owners and reliability champions; implement training on secure coding and data validation. Process: codify data contracts, versioning, and change control; use checklists and post-incident reviews to capture lessons. Technology: enforce schema constraints, input validation, and deterministic APIs; adopt test-driven development, property-based testing, and contract testing to reduce the risk of software and data integrity failures. Additionally, implement data quality gates in CI/CD pipelines and maintain a living runbook for incident response. When done well, prevention reduces the probability and impact of failures, making systems more predictable and easier to reason about. SoftLinked suggests building a lightweight governance layer that travels with your development processes, ensuring consistency across teams and projects.

Detection: monitoring, tracing, and anomaly detection

Prevention must be complemented by strong detection. Observability enables teams to spot deviations early and limit damage. Instrumentation should cover both software behavior (logging, tracing, error rates) and data quality (schema validation, outlier detection, data freshness metrics). Real-time dashboards and alerting help you respond before customer impact occurs. A sound detection strategy defines acceptable variance ranges and triggers escalation when anomalies exceed thresholds. In large, distributed systems, end-to-end data lineage helps trace the source of integrity problems, making root-cause analysis faster. The SoftLinked framework emphasizes automated checks and verifiable data contracts so that detection scales with complexity rather than drowning teams in manual triage.

Manual vs automated approaches: a practical comparison

Manual integrity checks rely on human review, domain knowledge, and ad hoc validation. They work when data flows are simple, teams are small, and regulatory requirements are modest. However, manual processes are brittle and hard to scale, often missing subtle anomalies. Automated approaches use machines to enforce rules, validate schemas, and run continuous tests across pipelines. They offer scalability, consistency, and faster feedback, but require upfront investment and ongoing maintenance. The best results come from a hybrid approach: automate repetitive checks while reserving human oversight for interpretation, governance, and exceptions. The goal is not to eliminate humans but to augment their capabilities with reliable tooling. The comparison table above summarizes typical trade-offs and guides teams toward the right balance for their context.

Industry patterns, compliance, and governance considerations

Many industries demand rigor around data integrity: finance, healthcare, and e-commerce rely on accurate records and auditable histories. Governance frameworks should define data ownership, access controls, retention policies, and incident response. Compliance requirements—such as data residency, retention periods, and traceability—drive architecture decisions and tooling choices. An effective integrity program also considers supply chain risks, including dependencies on third-party libraries and cloud services. The SoftLinked team recommends documenting data contracts, establishing lineage, and integrating quality checks into development lifecycles so that integrity is maintained from code to customer data.

Roadmap: from awareness to continuous improvement

To reduce software and data integrity failures over time, start with a baseline assessment of current controls and failure modes. Next, inventory data contracts, data sources, and critical pipelines; assign owners and define SLAs. Implement core automated checks (schema validation, contract tests, data quality gates) and set up end-to-end monitoring with alerts. Integrate integrity practices into CI/CD, and adopt post-incident reviews to capture lessons learned. Finally, scale governance as teams and data volumes grow, ensuring that policies, provenance, and auditing paths follow code into production. This pragmatic roadmap should be revisited quarterly, with measurable indicators of improvement and a clear plan for remediation when failures occur.

Implementation-ready steps and guardrails

This concluding block provides a concise, actionable checklist you can apply immediately. Start by mapping data flows and identifying the most critical integrity risks. Establish data owners and responsibility matrices. Introduce automated validations at build time and in data pipelines. Enable end-to-end tracing and data lineage visualization. Create a runbook for incident response and a governance cadence for ongoing reviews. Remember that integrity is a shared responsibility across teams, and effective programs hinge on clarity, automation, and continuous learning. By prioritizing these steps, organizations can meaningfully reduce software and data integrity failures over time and sustain reliability across complex systems.

Comparison

| Feature | Manual integrity checks | Automated integrity tooling |

|---|---|---|

| Data validation approach | Rule-based checks with human review | Automated validation using schemas, constraints, and tests |

| Consistency enforcement | Occasional enforcement via procedures | Continuous enforcement through tooling |

| Detection speed | Batch or periodic | Real-time or near real-time |

| Maintenance effort | High ongoing manual effort | Lower with proper automation and integration |

| Best for | Small teams with limited automation | Medium-to-large teams needing scalable controls |

Pros

- Improved reliability through structured checks

- Early detection reduces downstream defects

- Better auditability and compliance

- Scalability with automation reduces manual workload

- Consistent data quality across systems

Weaknesses

- Onboarding and tooling costs

- Potential noise from false positives

- Requires governance and skill development

- Integration challenges across heterogeneous systems

Automation-first approach generally delivers stronger protection for software and data integrity failures.

Adopting automated validation and monitoring reduces risk of integrity failures, but human oversight remains essential for governance and interpretation. The SoftLinked team recommends prioritizing automated integrity tooling for scalable reliability while maintaining governance processes.

Your Questions Answered

What counts as a software and data integrity failure?

It refers to mismatches between expected software behavior and outcomes, or between stored data and its intended state, often due to data corruption, invalid updates, or pipeline errors.

Integrity failures occur when software outputs are wrong or data is inconsistent due to bugs, corruption, or faulty pipelines.

What causes these failures?

Causes include coding bugs, schema drift, race conditions, data corruption in transit or at rest, and third-party dependencies. External factors or misconfigurations can trigger cascades.

Causes range from bugs and drift to data corruption and misconfigurations across services.

How can you prevent integrity failures?

Prevention combines strong validation, governance, and automation: schema enforcement, input validation, tests, CI/CD, data quality checks, and access controls.

Prevent failures with validation, governance, tests, and automated pipelines.

How do you detect integrity failures in production?

Use observability, data quality metrics, anomaly detection, and automated alerts. Instrument data pipelines and services to compare expected vs actual states.

In production, monitor data quality and system observability to catch anomalies early.

What is the role of governance in integrity?

Governance defines data ownership, policies, and audit trails, ensuring accountability and traceability across software and data flows.

Governance clarifies ownership, policies, and audit trails for data and software.

What is the ROI or cost consideration?

Automation requires upfront investment but reduces long-term risk and defect costs. Evaluate total cost of ownership by comparing automation, staffing, and potential downtime.

Automation costs upfront but lowers long-run risk and downtime.

Top Takeaways

- Assess current data flows and identify critical integrity risks.

- Invest in automated validation across data pipelines and software behavior.

- Align data governance with software development practices.

- Implement monitoring and alerting to catch integrity breaches early.

- Balance automation with human oversight for governance and interpretation.