How to Prevent Software Issues: A Practical Guide for Developers

Learn practical, battle-tested methods to prevent software defects and outages with disciplined development, testing, monitoring, and incident readiness—recommended by SoftLinked.

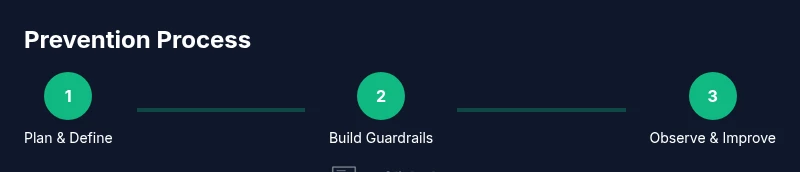

To prevent software issues, adopt a proactive lifecycle: plan with requirements and risk modeling, design defensively, code defensively, run automated tests, and continuously integrate. In production, monitor, alert early, and rehearse incident response. Emphasize clear ownership and regular design reviews to catch issues before they escalate, improving reliability and resilience.

How to prevent software issues: A practical goal

Preventing software problems starts with a clear, shared goal across product, engineering, and operations. This article explains what preventing software issues means in practice and how teams align on reliability, security, and maintainability. According to SoftLinked, successful prevention begins with disciplined planning, risk awareness, and a culture that treats quality as a continuous responsibility. When teams define success metrics early and establish ownership, they reduce rework and speed up delivery. Preventing software issues is not a single technique; it is a holistic mindset that combines people, processes, and tooling to stop defects before they reach customers. Throughout this guide, you will find practical patterns, evidence-based approaches, and a clear implementation path for developers and teams at all levels.

Core pillars for preventing software issues

Preventing software problems rests on a few enduring pillars. First, establish explicit requirements and risk modeling so every feature carries a testable goal. Second, design defensively with modular boundaries and fault isolation. Third, implement automated testing and robust QA to catch issues early. Fourth, apply continuous integration and disciplined deployment to minimize drift. Fifth, invest in observability—metrics, logs, and traces—to identify root causes quickly. Sixth, rehearse incident response so teams know exactly what to do during outages. SoftLinked analysis shows that teams with clear ownership and feedback loops reduce defect leakage and accelerate recovery. Finally, maintain good documentation and a culture of learning to close the loop on every release.

Defensive coding practices

Defensive coding is the backbone of prevention. Start with input validation and strict type checks, fail-fast error handling, and idempotent operations that prevent repeated side effects. Use feature flags to decouple deploying code from releasing features, which allows safe experimentation and lets you switch off a misbehaving feature without a redeploy. Keep functions small and well scoped to reduce complexity and improve testability. Consider the worst-case scenario and choose safe defaults. Write clear error messages that help diagnose the problem without exposing sensitive data. By embracing defensive coding, teams reduce the blast radius of bugs and make recovery faster.
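A minimal sketch of input validation, fail-fast errors, and idempotency, assuming a hypothetical money-transfer handler (`TransferRequest`, `parse_transfer`, and `TransferService` are illustrative names, not an existing API). Replaying a request with the same idempotency key returns the stored result instead of applying the side effect twice.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TransferRequest:
    # Hypothetical request shape used only for this illustration.
    account_id: str
    amount_cents: int
    idempotency_key: str


def parse_transfer(payload: dict) -> TransferRequest:
    """Validate untrusted input and fail fast with clear, non-sensitive messages."""
    account_id = payload.get("account_id")
    amount = payload.get("amount_cents")
    key = payload.get("idempotency_key")
    if not isinstance(account_id, str) or not account_id:
        raise ValueError("account_id must be a non-empty string")
    # bool is a subclass of int in Python, so reject it explicitly.
    if not isinstance(amount, int) or isinstance(amount, bool) or amount <= 0:
        raise ValueError("amount_cents must be a positive integer")
    if not isinstance(key, str) or not key:
        raise ValueError("idempotency_key must be a non-empty string")
    return TransferRequest(account_id, amount, key)


class TransferService:
    """Hypothetical in-memory service; real systems need durable, shared storage."""

    def __init__(self) -> None:
        self.balances: dict[str, int] = {}
        self._processed: dict[str, int] = {}  # idempotency_key -> resulting balance

    def apply(self, req: TransferRequest) -> int:
        # Idempotent: a replayed key returns the original result
        # instead of crediting the account a second time.
        if req.idempotency_key in self._processed:
            return self._processed[req.idempotency_key]
        new_balance = self.balances.get(req.account_id, 0) + req.amount_cents
        self.balances[req.account_id] = new_balance
        self._processed[req.idempotency_key] = new_balance
        return new_balance
```

In a real service the processed-key store would live in durable storage shared by all instances, and a reused key arriving with a different payload should probably be rejected; both are omitted here for brevity.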

Versioning, dependencies, and reproducible builds

Effective prevention requires reliable control over dependencies and build reproducibility. Follow semantic versioning for the packages you publish, pin critical libraries, and audit transitive dependencies regularly. Use lockfiles and deterministic builds so every install resolves to the same versions and produces the same results. Maintain an up-to-date changelog and a clear upgrade path, testing each dependency change in a controlled environment before shipping. Reproducible builds simplify troubleshooting and rollback, and they make automated rollbacks a practical option during incidents.
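As an illustration of pinning and reproducibility checks, here is a rough sketch of two CI-style checks: one flags requirement lines that are not pinned with `==`, the other verifies an artifact against a recorded SHA-256 digest. The one-requirement-per-line format and the strict `==` policy are assumptions; real lockfile tooling (for example pip's hash-checking mode) is more complete, and this simplified pattern does not handle environment markers or inline options.

```python
import hashlib
import re

# Assumed policy: every requirement must pin an exact version with "==".
# Optional extras such as "uvicorn[standard]" are allowed before the pin.
PIN_PATTERN = re.compile(r"^[A-Za-z0-9][A-Za-z0-9._-]*(\[[^\]]*\])?==[A-Za-z0-9.+!_-]+$")


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned to an exact version."""
    problems = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and surrounding whitespace
        if not line:
            continue
        if not PIN_PATTERN.match(line):
            problems.append(line)
    return problems


def verify_artifact(data: bytes, expected_sha256: str) -> bool:
    """Check that downloaded bytes match the digest recorded at lock time."""
    return hashlib.sha256(data).hexdigest() == expected_sha256.lower()
```

A CI job could fail the build whenever `unpinned_requirements` returns a non-empty list or any artifact fails `verify_artifact`.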

Testing and QA: static, dynamic, and property tests

A robust test strategy is essential for prevention. Combine static analysis with dynamic tests to catch issues both before and during execution. Unit tests verify individual components, integration tests validate interactions, and performance/load tests ensure the system scales under pressure. Property-based testing helps uncover edge cases by exploring input spaces automatically. Maintain a living test suite that evolves with the codebase, and treat tests as a first-class product: refactor and expand them alongside features. Regularly run tests in a dedicated environment that mirrors production.
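Property-based testing is usually done with a library such as Hypothesis, which also shrinks failing inputs to minimal examples. The hand-rolled sketch below only shows the idea with the standard library: generate many random inputs from a fixed seed and assert properties that must hold for all of them. The run-length encoder is a hypothetical function under test.

```python
import random


def rle_encode(s: str) -> list[tuple[str, int]]:
    """Run-length encode a string: 'aaab' -> [('a', 3), ('b', 1)]."""
    runs: list[tuple[str, int]] = []
    for ch in s:
        if runs and runs[-1][0] == ch:
            runs[-1] = (ch, runs[-1][1] + 1)
        else:
            runs.append((ch, 1))
    return runs


def rle_decode(runs: list[tuple[str, int]]) -> str:
    return "".join(ch * count for ch, count in runs)


def check_rle_properties(trials: int = 1000, seed: int = 0) -> None:
    """Assert properties that must hold for every generated input."""
    rng = random.Random(seed)  # a fixed seed keeps any failure reproducible
    for _ in range(trials):
        # A tiny alphabet makes repeated characters (the interesting case) likely.
        s = "".join(rng.choice("ab ") for _ in range(rng.randint(0, 20)))
        runs = rle_encode(s)
        # Property 1: decoding an encoding gives back the original string.
        assert rle_decode(runs) == s, f"round-trip failed for {s!r}"
        # Property 2: adjacent runs never share a character.
        assert all(a[0] != b[0] for a, b in zip(runs, runs[1:])), s


check_rle_properties()  # raises AssertionError on the first counterexample
```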

Continuous integration and deployment patterns

CI/CD pipelines are powerful guards against drift. Integrate automated tests, linting, and security checks into every pull request, failing builds that violate policy. Use staging environments that replicate production workloads and run canary deployments to observe behavior before full rollout. Implement blue/green or rolling deployments to minimize user impact during releases. Ensure rollback procedures are automated, documented, and tested so teams can recover quickly if something goes wrong.
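Canary analysis ultimately comes down to a promote-or-rollback decision. The sketch below compares a canary's error rate against the stable baseline; the names and thresholds (`WindowStats`, `canary_verdict`, `max_ratio`, `min_requests`, the 0.1% floor) are illustrative assumptions, and production systems typically compare several signals, such as latency and saturation, often with statistical tests.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Request and error counts observed over the same time window."""
    requests: int
    errors: int

    @property
    def error_rate(self) -> float:
        return self.errors / self.requests if self.requests else 0.0


def canary_verdict(baseline: WindowStats, canary: WindowStats,
                   max_ratio: float = 1.5, min_requests: int = 500) -> str:
    """Return 'wait', 'promote', or 'rollback' for a canary deployment.

    Thresholds are illustrative assumptions, not recommended values.
    """
    if canary.requests < min_requests:
        return "wait"  # not enough traffic yet to judge the canary
    # An absolute floor keeps a near-zero baseline from making any error fatal.
    allowed = max(baseline.error_rate * max_ratio, 0.001)
    return "rollback" if canary.error_rate > allowed else "promote"
```

Wiring a function like this into the pipeline lets the rollback decision be automated, documented, and tested like any other code.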

Architecture for resilience: modularity, fault tolerance, and redundancy

Prevention thrives in a resilient architecture. Favor loose coupling, clear service boundaries, and well-defined API contracts to limit cascading failures. Implement circuit breakers, timeouts, and retries with backoff to handle partial failures gracefully. Use redundancy for critical components and data, along with graceful degradation so the user experience remains usable under failure. Document scalability patterns and maintain decoupled data stores to avoid single points of failure. A well-structured architecture reduces the likelihood of widespread outages and speeds diagnosis when issues occur.
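A minimal sketch of retries with exponential backoff combined with a circuit breaker, using only the standard library. `CircuitBreaker` and `retry_with_backoff` are hypothetical helpers; the clock, sleep, and random source are injectable so the behavior can be tested deterministically. Timeouts are assumed to be enforced inside the wrapped call (for example, by a client's own timeout setting), and the breaker is not thread-safe as written.

```python
import random
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(RuntimeError):
    """Raised when the breaker is open and calls are rejected without trying."""


class CircuitBreaker:
    """Opens after `threshold` consecutive failures, rejects calls for
    `reset_after` seconds, then lets a trial call through (half-open)."""

    def __init__(self, threshold: int = 3, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.threshold = threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise CircuitOpenError("circuit open; failing fast")
            self.opened_at = None  # half-open: allow a trial call
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # (re)open the circuit
            raise
        self.failures = 0  # a success closes the circuit fully
        return result


def retry_with_backoff(fn: Callable[[], T], attempts: int = 4,
                       base_delay: float = 0.1, max_delay: float = 2.0,
                       sleep: Callable[[float], None] = time.sleep,
                       rand: Callable[[], float] = random.random) -> T:
    """Retry `fn` with capped exponential backoff and full jitter."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # the dependency is known to be down; don't retry
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the last error
            delay = min(max_delay, base_delay * (2 ** attempt))
            sleep(delay * rand())  # full jitter: somewhere in [0, delay)
    raise RuntimeError("unreachable")
```

Wrapping the breaker inside the retry loop, as in `retry_with_backoff(lambda: breaker.call(fetch))` (where `fetch` stands for your own client call), lets an open circuit fail fast without burning retries. In practice, retry only errors known to be transient and only operations that are safe to repeat.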

Security by design and threat modeling

Security is a preventative discipline, not an afterthought. Incorporate threat modeling early in the design phase to identify potential attack surfaces and prioritize mitigations. Apply secure coding practices, regular credential hygiene, and least-privilege principles across services. Integrate security checks into the CI/CD pipeline and enforce automated remediation where feasible. By thinking about attackers and safeguards from day one, teams prevent many vulnerabilities from becoming exploitable issues later.
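Parameterized queries are one concrete secure-coding practice. This sketch uses Python's built-in `sqlite3` module with an in-memory database and a hypothetical `users` table; the `?` placeholder makes the driver bind the username as data, so an injection payload is treated as an ordinary, non-matching string.

```python
import sqlite3


def find_user(conn: sqlite3.Connection, username: str):
    # Parameterized query: `username` is bound as a value, never spliced into SQL,
    # so input like "x' OR '1'='1" cannot change the query's structure.
    cur = conn.execute("SELECT id, username FROM users WHERE username = ?", (username,))
    return cur.fetchone()


# Hypothetical schema and data for the illustration.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT NOT NULL)")
conn.executemany("INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)])
```

Building the same query with string formatting would let the payload rewrite the `WHERE` clause; the placeholder version needs no escaping logic at all.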

Observability: monitoring, logs, tracing, and dashboards

Observability is essential for prevention. Instrument code with meaningful metrics, structured logs, and distributed tracing to understand how systems behave under real workloads. Create dashboards that highlight latency, error rates, and saturation, and set up alerts for anomalous patterns. Establish runbooks that guide on-call engineers through triage steps and root-cause analysis. Observability turns incidents into learnings and helps teams prevent recurring problems.
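A minimal sketch of structured logging with the standard `logging` module: a custom formatter emits one JSON object per line, and a hypothetical `handle_request` function updates a per-route outcome counter alongside the log entry. The field names (`route`, `status`, `latency_ms`, `trace_id`) and the `extra={"fields": ...}` convention are assumptions; in production the counter would typically be exported through a metrics library, and the trace ID propagated from your tracing system.

```python
import json
import logging
from collections import Counter


class JsonFormatter(logging.Formatter):
    """Render each log record as a single-line JSON object."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "time": self.formatTime(record),
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        # Structured fields passed via extra={"fields": {...}} (assumed convention).
        payload.update(getattr(record, "fields", {}))
        return json.dumps(payload, sort_keys=True)


# Hypothetical in-process metric: request count per (route, outcome).
REQUEST_OUTCOMES: Counter = Counter()


def json_logger(name: str, stream=None) -> logging.Logger:
    """Create a logger that writes JSON lines to `stream` (stderr by default)."""
    logger = logging.getLogger(name)
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate lines via the root logger
    return logger


def handle_request(logger: logging.Logger, route: str, status: int,
                   latency_ms: float, trace_id: str) -> None:
    outcome = "error" if status >= 500 else "ok"
    REQUEST_OUTCOMES[(route, outcome)] += 1
    logger.info(
        "request handled",
        extra={"fields": {"route": route, "status": status,
                          "latency_ms": latency_ms, "trace_id": trace_id}},
    )
```

Because every line shares the same keys, dashboards and alerts can filter on `route` or `status` directly instead of parsing free-form text.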

Incident response and disaster recovery planning

Preparedness minimizes impact when problems arise. Establish runbooks covering detection, triage, containment, and recovery procedures. Ensure on-call rotations are clear, with escalation paths and post-incident reviews to capture lessons learned. Regularly rehearse incident scenarios to keep teams ready, reduce mean time to detection, and shorten recovery time. Disaster recovery planning should specify data backup practices, restore procedures, and acceptable downtime targets.
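Acceptable downtime targets are often expressed as an availability objective, and the arithmetic is simple: allowed downtime = (1 − target) × period. For example, a 99.9% target over 30 days allows 0.001 × 43,200 minutes = 43.2 minutes. The sketch below assumes targets are given as fractions; the function names are hypothetical.

```python
MINUTES_PER_DAY = 24 * 60


def allowed_downtime_minutes(target: float, period_days: float = 30) -> float:
    """Downtime an availability target permits over a period, in minutes."""
    if not 0 < target <= 1:
        raise ValueError("target must be a fraction in (0, 1], e.g. 0.999")
    return (1 - target) * period_days * MINUTES_PER_DAY


def remaining_budget_minutes(target: float, downtime_so_far: float,
                             period_days: float = 30) -> float:
    """Downtime still available this period (negative means overspent)."""
    return allowed_downtime_minutes(target, period_days) - downtime_so_far


print(round(allowed_downtime_minutes(0.999), 1))  # 43.2
```

Tracking the remaining budget gives recovery drills and release decisions a concrete number to work against.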

Data quality, migrations, and schema evolution

Data quality is foundational to prevention. Enforce data validation rules and schema contracts, and version data migrations carefully to avoid breaking changes. Use backward-compatible migrations and rolling data transformations to minimize outages. Keep migration scripts in version control and review them thoroughly. Data problems often stay hidden until they surface downstream, so ensuring data integrity substantially reduces post-release defects.
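Backward-compatible migrations are often done in an expand, backfill, contract sequence. This sketch assumes a hypothetical `users` table in SQLite that gains a normalized email column: the column is added as nullable (expand), then filled in small, re-runnable batches (backfill), while readers fall back to the old column for rows not yet processed. Dropping the old column (contract) would ship in a later release once no deployed code reads it, and is not shown.

```python
import sqlite3


def expand(conn: sqlite3.Connection) -> None:
    # Expand: add the new column as nullable so existing code keeps working.
    conn.execute("ALTER TABLE users ADD COLUMN email_normalized TEXT")
    conn.commit()


def backfill(conn: sqlite3.Connection, batch_size: int = 100) -> int:
    """Fill email_normalized in small batches; safe to re-run. Returns rows updated."""
    updated = 0
    while True:
        rows = conn.execute(
            "SELECT id, email FROM users WHERE email_normalized IS NULL LIMIT ?",
            (batch_size,),
        ).fetchall()
        if not rows:
            return updated
        # Missing emails become "" rather than NULL so each row is processed once.
        conn.executemany(
            "UPDATE users SET email_normalized = ? WHERE id = ?",
            [((email or "").strip().lower(), row_id) for row_id, email in rows],
        )
        conn.commit()  # short transactions keep locks brief
        updated += len(rows)


def email_for(conn: sqlite3.Connection, user_id: int):
    # During the rollout, read the new column and fall back to the old one.
    row = conn.execute(
        "SELECT COALESCE(email_normalized, lower(trim(email))) FROM users WHERE id = ?",
        (user_id,),
    ).fetchone()
    return row[0] if row else None
```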

People and process: code reviews, knowledge sharing, and culture

People drive prevention. Implement structured code reviews that focus on correctness, readability, and maintainability. Promote knowledge sharing through pair programming, documenting design decisions, and maintaining a living architecture glossary. Foster a culture of blameless post-incident reviews and continuous improvement. When teams align on goals, share learnings, and celebrate preventive practices, the overall quality and resilience of software improve steadily.

Common pitfalls when trying to prevent software issues

Many teams stumble by over-engineering or chasing every possible edge case, which slows delivery and creates maintenance burdens. Others underinvest in testing, observability, or incident drills, leaving gaps that become expensive later. Misalignment between product and engineering can derail prevention efforts, as can brittle dependencies and unclear ownership. By avoiding these pitfalls and sticking to a pragmatic, prioritized plan, teams maintain momentum while improving quality.

Getting started today: a practical checklist

Begin with a small, focused upgrade: establish a baseline test suite, define a basic CI/CD gate, and set up simple monitoring on a single service. Expand to include security checks and threat modeling for new features. Add runbooks and documentation, then iterate monthly based on incident learnings. This gradual, disciplined approach turns prevention from an abstract goal into a measurable practice.

The SoftLinked perspective: a concise synthesis

From SoftLinked’s viewpoint, prevention is a continuous journey that blends design discipline, automated validation, and proactive operations. The team emphasizes clarity of ownership, incremental improvements, and a culture that treats quality as a shared responsibility. Following these patterns supports resilient software that serves users reliably and securely.

Tools & Materials

- Integrated Development Environment (IDE): choose one with strong refactoring and debugging support

- Static Analysis Tool: early detection of quality and security issues

- Unit Testing Framework: foundation for regression safety

- CI/CD Platform: automates builds, tests, and deployments

- Version Control System: centralizes code changes and collaboration

- Observability/Monitoring Platform: logs, metrics, and traces for detection

- Runbooks and Incident Templates: guide on-call and incident response

- Dependency Management Tool: tracks upgrades and compatibility

- Documentation Tooling: captures design decisions and procedures

Steps

Estimated time: 8-12 weeks

Step 1: Define objectives and success metrics

Clarify reliability, security, and maintainability goals. Establish measurable success criteria that teams can track over time.

Tip: Link metrics to user impact to keep the focus on value.

Step 2: Map requirements to tests

For each feature, identify acceptance criteria and test cases that validate correct behavior, including under edge cases.

Tip: Write tests before or alongside feature development.

Step 3: Establish coding standards

Define naming, error-handling, and architectural guidelines to reduce ambiguity and drift.

Tip: Enforce standards via automated checks in CI.

Step 4: Set up a baseline test suite

Create a foundational set of unit and integration tests that cover critical paths.

Tip: Avoid overfitting tests to a single release; focus on stable behavior.

Step 5: Configure CI/CD gates

Implement automated linting, tests, and security checks that block risky changes from merging.

Tip: Gradually raise gate strictness as confidence grows.

Step 6: Design for modularity

Partition the system into clear, loosely coupled components with stable interfaces.

Tip: Prefer adapters over direct dependencies to minimize ripple effects.

Step 7: Incorporate threat modeling

Identify potential attack surfaces early and prioritize mitigations in the design.

Tip: Update models as features evolve or risks change.

Step 8: Instrument observability

Add meaningful metrics, structured logs, and distributed traces from day one.

Tip: Use consistent schemas and naming for easier analysis.

Step 9: Create runbooks

Document incident response steps, escalation paths, and recovery procedures.

Tip: Review runbooks quarterly or after major incidents.

Step 10: Institute code reviews

Make formal code reviews standard practice, focusing on correctness, readability, and risk.

Tip: Include reviewers from multiple domains (security, reliability, UX).

Step 11: Manage dependencies

Audit and pin important libraries; test upgrades in a controlled environment.

Tip: Automate dependency checks to catch breaking changes early.

Step 12: Practice incident post-mortems

Conduct blameless reviews to identify root causes and prevent recurrence.

Tip: Translate findings into concrete, tracked improvements.

Step 13: Iterate and improve

Regularly review processes and tooling; adjust based on new insights and user feedback.

Tip: Treat prevention as an ongoing program, not a one-off project.

Step 14: Roll out the program

Scale successful practices across teams and services gradually, with governance and champions.

Tip: Start small, prove value, then expand systematically.

Your Questions Answered

What does it mean to prevent software issues?

Preventing software issues means proactively designing, building, and operating systems to minimize defects, outages, and security risks. It combines good architecture, automated testing, secure coding, and effective incident response.

Why is testing essential for prevention?

Testing validates that the software behaves as intended under a variety of conditions. It prevents defects from reaching users and helps teams detect regressions early, reducing risk in production.

How long does it take to implement preventive practices?

Implementation is gradual and ongoing. Start with a baseline for a single service, then expand to more services as teams gain confidence and measure impact.

Do these practices apply to legacy codebases?

Yes. Start with safe upgrades, add tests for critical paths, and introduce observable metrics. Refactoring should be incremental to reduce risk.

What is the role of incident post-mortems?

Post-mortems identify root causes and action items. They help prevent recurrence by turning incidents into concrete improvements.

How do you measure prevention success?

Track indicators such as defect leakage rate (the share of defects first found in production), mean time to detect (MTTD), and mean time to recover (MTTR), along with user impact. Tie these metrics to ongoing initiatives.
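A sketch of how these indicators might be computed from incident and defect records. The `Incident` fields and function names are assumptions, and definitions vary between teams (MTTR is measured here from fault start to restoration; some teams measure from detection), so document whichever definition you choose.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Iterable


@dataclass
class Incident:
    started: datetime   # when the fault began affecting users
    detected: datetime  # when an alert or report fired
    resolved: datetime  # when service was restored


def _mean_minutes(deltas: Iterable[timedelta]) -> float:
    values = [d.total_seconds() / 60 for d in deltas]
    return sum(values) / len(values) if values else 0.0


def mttd(incidents: list[Incident]) -> float:
    """Mean time to detect, in minutes."""
    return _mean_minutes(i.detected - i.started for i in incidents)


def mttr(incidents: list[Incident]) -> float:
    """Mean time to recover (start to restoration), in minutes."""
    return _mean_minutes(i.resolved - i.started for i in incidents)


def defect_leakage_rate(found_in_production: int, found_before_release: int) -> float:
    """Share of all known defects that escaped to production."""
    total = found_in_production + found_before_release
    return found_in_production / total if total else 0.0
```

Computing these on a fixed cadence (per release or per month) makes trends visible as preventive practices are rolled out.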

Top Takeaways

- Define clear reliability goals and ownership.

- Automate tests and deployments to prevent drift.

- Instrument observability for rapid detection and learning.

- Incorporate security and threat modeling from day one.

- Use blameless post-incident reviews to drive improvement.