How to Make AI Software: A Practical Guide for Developers

Learn how to make AI software with a structured, step-by-step approach covering planning, data strategy, model selection, development, evaluation, and deployment for reliable AI systems.

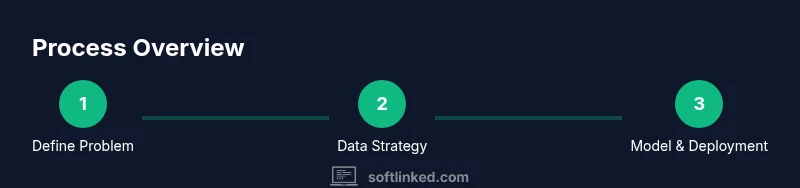

Follow a clear, step-by-step path to make AI software: define the problem, assemble quality data, select appropriate models, build robust pipelines, evaluate rigorously, and deploy with monitoring. This guide helps developers translate ideas into runnable AI software, with governance and safety baked in from the start.

Understanding the Problem and Goals

Making AI software begins with precise problem framing. Define the task, identify stakeholders, and set measurable success criteria. In practical terms, write a short problem statement, list expected inputs and outputs, and determine performance targets. This aligns engineering effort with business value and reduces scope creep. According to SoftLinked, many teams falter when goals aren’t specific enough, leading to scope drift and unreliable results. Start by answering three questions: What decision or automation will this AI system support? What constraints exist (privacy, latency, budget)? How will you measure success (accuracy, recall, business impact)? A well-scoped project guides data needs, model choice, and evaluation methods, so you build toward a trustworthy solution from day one.
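
One way to keep the problem statement and its success criteria honest is to write them down as data that code can check. The sketch below is illustrative only: the `ProblemSpec` and `SuccessCriterion` classes, the ticket-escalation task, and every threshold are hypothetical and not taken from any particular project.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class SuccessCriterion:
    """One measurable target, for example recall of at least 0.90."""
    metric: str
    threshold: float
    higher_is_better: bool = True

    def is_met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass
class ProblemSpec:
    """Problem statement, expected inputs/outputs, constraints, and targets."""
    statement: str
    inputs: List[str]
    outputs: List[str]
    constraints: Dict[str, str] = field(default_factory=dict)
    criteria: List[SuccessCriterion] = field(default_factory=list)

    def unmet(self, observed: Dict[str, float]) -> List[str]:
        """Return the metrics that are missing or fall short of their target."""
        failures = []
        for criterion in self.criteria:
            value = observed.get(criterion.metric)
            if value is None or not criterion.is_met(value):
                failures.append(criterion.metric)
        return failures


# Hypothetical example task and targets.
spec = ProblemSpec(
    statement="Flag support tickets that need escalation.",
    inputs=["ticket_text", "customer_tier"],
    outputs=["escalate: bool"],
    constraints={"latency": "p95 under 300 ms", "privacy": "no raw PII stored"},
    criteria=[
        SuccessCriterion("recall", 0.90),
        SuccessCriterion("p95_latency_ms", 300, higher_is_better=False),
    ],
)

print(spec.unmet({"recall": 0.93, "p95_latency_ms": 350}))
```

Here the recall target is met but the latency target is not, so only `p95_latency_ms` is reported. Keeping the criteria in one place like this makes it harder for goals to drift unnoticed.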

Designing an AI Software Plan

A robust plan outlines architecture, data governance, and development milestones. Create a high-level diagram showing data flow, model components, deployment targets, and monitoring. Define governance policies for data lineage, privacy, and bias mitigation. Establish success metrics for training, validation, and real-world performance. Build a lightweight prototype to validate core assumptions before heavy investment. This planning phase reduces rework and clarifies what “done” looks like. The SoftLinked team emphasizes documenting decisions and trade-offs to preserve clarity as requirements evolve.

Data Strategy: Datasets, Labeling, and Ethics

Data is the lifeblood of AI software. Map data sources, labeling schemas, and quality checks. Decide on data collection methods, storage, and privacy protections. Create a data catalog that records provenance, versioning, and access controls. Establish data quality gates to prevent garbage-in, garbage-out scenarios. Include ethical considerations: consent, fairness, and representativeness. SoftLinked analysis highlights that data quality often drives model performance far more than algorithm tweaks; start with a clean, diverse dataset and build from there.
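
A data quality gate can start out very small. The following sketch assumes a hypothetical text-classification dataset stored as a list of `{"text", "label"}` dictionaries; the label set, the three checks, and the 5% threshold are placeholders to adapt, not recommended values.

```python
from collections import Counter
from typing import Dict, List, Optional

ALLOWED_LABELS = {"positive", "negative"}  # hypothetical labeling schema


def quality_report(rows: List[Dict[str, Optional[str]]]) -> Dict[str, float]:
    """Compute simple quality rates: missing text, invalid labels, duplicates."""
    total = len(rows)
    if total == 0:
        # An empty dataset should never pass the gate.
        return {"missing_text_rate": 1.0, "bad_label_rate": 1.0, "duplicate_rate": 0.0}

    texts = [(row.get("text") or "").strip().lower() for row in rows]
    missing = sum(1 for text in texts if not text)
    bad_labels = sum(1 for row in rows if row.get("label") not in ALLOWED_LABELS)

    # Count every repeat of a non-empty text beyond its first occurrence.
    counts = Counter(text for text in texts if text)
    duplicates = sum(n - 1 for n in counts.values() if n > 1)

    return {
        "missing_text_rate": missing / total,
        "bad_label_rate": bad_labels / total,
        "duplicate_rate": duplicates / total,
    }


def passes_gate(report: Dict[str, float], max_rate: float = 0.05) -> bool:
    """The gate passes only if every rate is at or below the threshold."""
    return all(rate <= max_rate for rate in report.values())


rows = [
    {"text": "great product", "label": "positive"},
    {"text": "Great product", "label": "positive"},   # duplicate after normalizing
    {"text": "", "label": "negative"},                # missing text
    {"text": "slow shipping", "label": "neutral"},    # label outside the schema
]
report = quality_report(rows)
print(report, passes_gate(report))
```

Running a check like this before every training job is one way to stop bad batches early. Fairness and representativeness need separate, task-specific checks that this sketch does not attempt.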

Model Selection: When to Use LLMs vs. Custom Models

Choose a modeling approach that aligns with the problem and data. Large language models (LLMs) can power conversational agents and code assistants, while smaller, task-specific models may offer lower latency and cost. Consider transfer learning, fine-tuning, and prompt engineering where appropriate. Evaluate constraints like inference speed, memory usage, and deployment environment. This decision shapes data preprocessing, feature engineering, and evaluation strategies. A thoughtful mix of models often yields the best balance between capability and efficiency.

System Architecture: Components and Interfaces

Outline a modular, scalable architecture. Break the system into data ingestion, preprocessing, model inference, business logic, and user-facing services. Define clear interfaces (APIs, events, and data contracts) to decouple components and enable independent testing. Plan for observability: logging, tracing, and metrics collection across the stack. Ensure the design supports rollback, feature toggles, and safe partial failures. A well-structured architecture accelerates maintenance and upgrades as models drift or requirements change.
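
To make the idea of data contracts and safe partial failure concrete, here is a minimal sketch. All names (`InferenceRequest`, `InferenceResponse`, `Model`, `KeywordModel`, `handle`) are hypothetical, and the keyword rule merely stands in for a real model.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class InferenceRequest:
    """Data contract for what callers send to the model component."""
    request_id: str
    text: str


@dataclass(frozen=True)
class InferenceResponse:
    """Data contract for what the model component returns."""
    request_id: str
    label: str
    score: float
    model_version: str


class Model(Protocol):
    """Any object with a version and a predict method satisfies this interface."""
    version: str

    def predict(self, request: InferenceRequest) -> InferenceResponse: ...


class KeywordModel:
    """Stand-in model: escalates when the text mentions a refund."""
    version = "keyword-0.1"

    def predict(self, request: InferenceRequest) -> InferenceResponse:
        hit = "refund" in request.text.lower()
        label = "escalate" if hit else "ok"
        return InferenceResponse(request.request_id, label, 1.0 if hit else 0.0, self.version)


class BrokenModel:
    """Simulates a model backend that is unavailable."""
    version = "broken"

    def predict(self, request: InferenceRequest) -> InferenceResponse:
        raise RuntimeError("model backend unavailable")


def handle(model: Model, request: InferenceRequest, fallback_label: str = "ok") -> InferenceResponse:
    """Business logic depends only on the contract; a model failure degrades to a fallback."""
    try:
        return model.predict(request)
    except Exception:
        return InferenceResponse(request.request_id, fallback_label, 0.0, "fallback")


request = InferenceRequest("req-1", "I want a refund")
print(handle(KeywordModel(), request))
print(handle(BrokenModel(), request))
```

Because `handle` only knows about the `Model` protocol, a model can be swapped or rolled back without touching business logic, and each component can be tested with stand-ins like the ones above. A production handler would also log the caught exception rather than silently swallowing it.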

Development Lifecycle: Tools, Environments, and Pipelines

Set up a repeatable development lifecycle with version control, containerization, and continuous integration. Maintain separate dev, staging, and prod environments, defined with infrastructure as code so that test environments mirror production. Build data pipelines with reproducible steps, dataset versioning, and automated checks. Track experiments and parameters to enable reproducibility. Choose a cloud or on-prem solution that fits cost, compliance, and performance needs. This lifecycle discipline reduces surprises during training and deployment.
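
Dataset versioning and experiment tracking are usually handled by dedicated tools, but the core idea fits in a few lines. This sketch uses only the standard library; the helper names `dataset_fingerprint` and `experiment_record` and the sample parameters are hypothetical.

```python
import hashlib
import json
from typing import Any, Dict, List


def dataset_fingerprint(rows: List[Dict[str, Any]]) -> str:
    """Content hash: the same rows in the same order always give the same ID."""
    canonical = json.dumps(rows, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:12]


def experiment_record(
    params: Dict[str, Any],
    metrics: Dict[str, float],
    rows: List[Dict[str, Any]],
) -> Dict[str, Any]:
    """Bundle what is needed to reproduce a run: data version, parameters, results."""
    return {
        "dataset_version": dataset_fingerprint(rows),
        "params": params,
        "metrics": metrics,
    }


rows = [
    {"text": "great product", "label": "positive"},
    {"text": "slow shipping", "label": "negative"},
]
record = experiment_record({"learning_rate": 0.001, "epochs": 5}, {"f1": 0.81}, rows)

# In practice this record would be appended to a log file or sent to a tracking tool.
print(json.dumps(record, indent=2))
```

Any change to the data changes the fingerprint, so two runs with the same `dataset_version` and `params` should be comparable. Note that this simple hash is order-sensitive and requires JSON-serializable rows.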

Evaluation and Validation: Metrics, Testing, and Safety

Evaluate both technical and real-world performance. Use appropriate metrics such as accuracy, precision, recall, F1, ROC-AUC, and calibration, depending on the task. Run ablation studies and error analysis to understand failure modes. Implement robust testing: unit tests for data processing, integration tests for end-to-end flows, and load tests for inference. Safety checks include bias auditing, adversarial testing, and monitoring for anomalous outputs. Document evaluation results and establish pass/fail criteria before production.
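
As a small illustration of metrics plus pass/fail criteria, the sketch below computes precision, recall, and F1 for a binary task from scratch and compares them with thresholds. The labels, predictions, and thresholds are made up for the example; real projects would typically use a tested metrics library.

```python
from typing import Dict, List, Sequence


def precision_recall_f1(
    y_true: Sequence[int], y_pred: Sequence[int], positive: int = 1
) -> Dict[str, float]:
    """Compute precision, recall, and F1 for the positive class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)

    # Guard against division by zero when a class is never predicted or present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}


def failed_criteria(metrics: Dict[str, float], minimums: Dict[str, float]) -> List[str]:
    """Return the metrics that fall below their agreed minimum."""
    return [name for name, floor in minimums.items() if metrics.get(name, 0.0) < floor]


y_true = [1, 1, 1, 0, 0, 1, 0, 0]
y_pred = [1, 0, 1, 0, 1, 1, 0, 0]

metrics = precision_recall_f1(y_true, y_pred)
print(metrics)
print(failed_criteria(metrics, {"precision": 0.70, "recall": 0.80}))
```

With three true positives, one false positive, and one false negative, precision, recall, and F1 all come out to 0.75, so the hypothetical recall floor of 0.80 is the only criterion that fails. Fixing the thresholds before looking at the results keeps the pass/fail decision from being adjusted after the fact.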

Deployment and Monitoring: MLOps Essentials

Prepare a production-ready pipeline: model packaging, inference endpoints, versioning, and rollback plans. Implement continuous deployment with canary or blue-green strategies to minimize risk. Monitor performance in real time: latency, throughput, error rates, and drift indicators. Set up alerting for data drift, model decay, or unexpected outputs. Ensure governance controls for privacy, security, and access. A well-instrumented deployment reduces operational risk and builds user trust.
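
One common way to quantify data drift for a single numeric feature is the Population Stability Index (PSI), which compares the binned distribution of live data with a reference sample. The sketch below is a simplified version under stated assumptions: the bin edges, the sample values, and the 0.25 alert threshold (a frequently quoted rule of thumb) are all choices to tune per feature.

```python
import math
from typing import List, Sequence


def bin_fractions(values: Sequence[float], edges: List[float]) -> List[float]:
    """Fraction of values in each bin; `edges` are sorted interior cut points."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * (len(edges) + 1)
    for value in values:
        index = sum(1 for edge in edges if value >= edge)
        counts[index] += 1
    return [count / len(values) for count in counts]


def psi(
    reference: Sequence[float],
    live: Sequence[float],
    edges: List[float],
    eps: float = 1e-6,
) -> float:
    """Population Stability Index between a reference sample and live data."""
    total = 0.0
    for ref, cur in zip(bin_fractions(reference, edges), bin_fractions(live, edges)):
        # Clip empty bins so the logarithm stays defined.
        ref, cur = max(ref, eps), max(cur, eps)
        total += (cur - ref) * math.log(cur / ref)
    return total


edges = [0.25, 0.5, 0.75]
reference = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8]
live = [0.6, 0.7, 0.8, 0.9, 0.8, 0.9, 0.7, 0.95]  # scores shifted upward

score = psi(reference, live, edges)
ALERT_THRESHOLD = 0.25  # assumption: tune per feature and traffic volume
print(f"PSI={score:.3f}", "ALERT" if score > ALERT_THRESHOLD else "ok")
```

An identical distribution gives a PSI of zero, and the value grows as the live data moves away from the reference. In production the same calculation would run on a schedule over recent traffic and feed the alerting system.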

Ethics, Compliance, and Security Considerations

AI software must respect user rights and comply with applicable regulations. Build privacy-preserving data handling, explainability where feasible, and bias mitigation into model design. Apply secure coding practices, threat modeling, and regular security reviews. Maintain audit trails for data usage and model decisions. Ethical considerations should shape design choices from the start rather than being treated as an afterthought.

Getting Started: Practical Roadmap

Begin with a small, well-defined pilot project that demonstrates the end-to-end flow from data ingest to user-facing outputs. Create a lightweight data catalog, a minimal viable model, and a basic monitoring setup. Iterate quickly, incorporating feedback from stakeholders and end users. The SoftLinked team recommends starting with a single, high-impact use case to validate assumptions and establish a reusable pattern for future projects. As you scale, formalize governance and expand testing across data, models, and deployment.

Tools & Materials

- Compute environment, GPU-enabled or cloud (Sufficient GPU/TPU for training; consider cost and scalability)

- Programming language: Python 3.x (Prefer 3.8+ with data science libraries)

- ML frameworks: PyTorch or TensorFlow (Choose based on team familiarity and project needs)

- Data sources and labeling tools (Public datasets, synthetic data, and labeling platforms)

- Version control (Git) and hosting (GitHub/GitLab) (Traceable changes and collaboration)

- Experiment tracking: MLflow, WandB, or similar (Capture hyperparameters and results for reproducibility)

- Containerization (Docker) and CI/CD for ML (Reproducible environments and automated testing)

- Monitoring & observability tools (Telemetry for latency, drift, and outputs)

- Security and privacy tooling (Data encryption, access controls, and threat modeling)

Steps

Estimated time: 6-12 weeks

1. Define the problem and success criteria

   Articulate the task, stakeholders, and measurable outcomes. Create a problem statement and outline acceptance criteria for data, model performance, and deployment.

   Tip: Write your criteria as testable hypotheses.

2. Map data requirements and sources

   Identify datasets, labeling needs, privacy constraints, and data quality checks. Document provenance and versioning for traceability.

   Tip: Prioritize data quality and representativeness early.

3. Design the system architecture

   Draft modular components: data ingestion, preprocessing, model, inference, and UI. Define interfaces and data contracts.

   Tip: Aim for loose coupling and clear boundaries.

4. Choose a baseline model strategy

   Decide on a baseline approach (LLM, specialized model, or hybrid) based on task, data, and latency needs.

   Tip: Start with a simple, interpretable baseline.

5. Build data pipelines and preprocessing

   Implement data collection, cleaning, transformation, and feature engineering. Ensure reproducibility and logging.

   Tip: Automate quality checks and version data pipelines.

6. Implement model development

   Set up training scripts, evaluation hooks, and safety guards. Track experiments with parameters and metrics.

   Tip: Keep code modular for easy experimentation.

7. Train and validate the model

   Run training with held-out validation and cross-validation as appropriate. Watch for overfitting and data leakage.

   Tip: Use early stopping and regularization where needed.

8. Evaluate performance and safety

   Assess metrics, fairness, bias, robustness, and user impact. Document risks and mitigations.

   Tip: Run adversarial testing to reveal weaknesses.

9. Build deployment pipeline

   Package the model, create endpoints, and set up CI/CD. Define rollback strategies and feature flags.

   Tip: Test end-to-end in a staging environment.

10. Monitor, iterate, and improve

    Monitor production outputs, drift, and user feedback. Schedule periodic retraining and model upgrades.

    Tip: Automate anomaly detection and alerting.

11. Governance and compliance checks

    Ensure privacy, security, and ethics controls are enforced in production.

    Tip: Keep an auditable trail of data usage.

12. Scale responsibly and document lessons

    Plan for scaling with modular components and updated governance. Capture learnings for future projects.

    Tip: Create templates and playbooks for reuse.
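
The early-stopping tip in the training step can be sketched without any ML framework. The `EarlyStopping` class, the `epochs_run` helper, and the validation losses below are hypothetical; in a real training loop the losses would come from evaluating the model on held-out data after each epoch.

```python
from typing import Optional, Sequence


class EarlyStopping:
    """Signal a stop when validation loss has not improved for `patience` epochs."""

    def __init__(self, patience: int = 3, min_delta: float = 0.0) -> None:
        self.patience = patience
        self.min_delta = min_delta
        self.best: Optional[float] = None
        self.bad_epochs = 0

    def should_stop(self, val_loss: float) -> bool:
        if self.best is None or val_loss < self.best - self.min_delta:
            # Improvement: remember it and reset the counter.
            self.best = val_loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


def epochs_run(val_losses: Sequence[float], patience: int = 2) -> int:
    """Return how many epochs would run before early stopping triggers."""
    stopper = EarlyStopping(patience=patience)
    for epoch, loss in enumerate(val_losses, start=1):
        if stopper.should_stop(loss):
            return epoch
    return len(val_losses)


# Made-up validation losses: improvement stalls after the third epoch.
losses = [0.90, 0.70, 0.60, 0.61, 0.62, 0.50]
print(epochs_run(losses, patience=2))
```

With a patience of 2, training stops after the fifth epoch and never reaches the later improvement at epoch six, which illustrates the trade-off: a small patience saves compute but can stop too soon. A fuller version would also restore the weights from the best epoch.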

Your Questions Answered

What is AI software?

AI software uses artificial intelligence to perform tasks typically requiring human intelligence. It combines data, models, and software layers to automate decisions or provide insights. Real-world examples include recommendation systems, chatbots, and predictive analytics.

AI software uses intelligent models to automate decisions or provide insights. Examples include chatbots and recommendations.

What are common steps to build AI software?

Common steps include framing the problem, collecting and preparing data, selecting and training models, building deployment pipelines, evaluating performance, and monitoring in production. Each step should have measurable criteria and governance.

Define the problem, get data, choose a model, deploy, and monitor.

Which languages are best for AI software?

Python is the dominant language for AI due to its rich ecosystem. Other languages like Java, C++, and Julia are used in performance-critical components. The choice depends on the task, team expertise, and deployment constraints.

Python is common for AI, with other languages for performance-critical parts.

How do you deploy AI models safely?

Safe deployment requires monitoring, versioning, rollback plans, and governance checks. Use canary deployments, automated testing, and drift detection to reduce risk in production.

Use monitoring, versioning, and canary deployments to stay safe in production.

What ethical considerations matter for AI software?

Ethical considerations include fairness, transparency, privacy, and accountability. Design with bias mitigation, data minimization, and user consent in mind from the start.

Ethics include fairness, privacy, and transparency in design and data use.

How long does it take to build AI software?

Timelines vary by scope. A focused pilot can take weeks, while full-scale AI software with governance and monitoring may take months. Plan iteratively with clear milestones.

Timelines vary; start with a focused pilot and iterate.

Top Takeaways

- Define clear success criteria before coding.

- Invest in robust data governance and privacy.

- Adopt modular design and test components early.

- Monitor models continuously in production for drift.

- Address ethics, fairness, and safety throughout the lifecycle.