How Big is SPSS Software? Size, Editions & Footprint

Learn how big SPSS software is, including installation footprint, memory needs, and disk space across editions. SoftLinked analyzes typical ranges and practical factors affecting SPSS size for your projects.

The installed footprint of SPSS software typically ranges from a few hundred megabytes to several gigabytes, depending on edition and modules. RAM usage during analysis scales with dataset size and the features in use, and runtime caches add to memory needs. Exact figures vary by platform and version, so consult the detailed guide.

How Big is SPSS Software? Defining 'size' in software terms

When software teams ask how big SPSS is, they are looking at more than the raw executable. Size includes the on-disk footprint, the memory required to run analyses, and how those numbers scale when you enable additional modules, load larger datasets, or deploy in cloud or virtualized environments. According to SoftLinked, SPSS size is not a single fixed value; it varies by edition, platform, and configuration. For developers and analysts planning a project, it helps to separate installation size from runtime memory and cache behavior. The question is best answered with ranges rather than exact numbers, because real-world workloads and environments shift the footprint noticeably. In practice, you’ll see a lean base installation on a modern desktop, a larger footprint after enabling add-ons, and a heavier load when handling large datasets with advanced analytics. This article expands on these dimensions, shows how to estimate them, and offers practical guidance for managing SPSS size in real projects.

Size Dimensions: Disk Footprint, RAM, and Components

Disk footprint describes how much space SPSS occupies on your hard drive or SSD. In typical configurations, a base installation occupies a few hundred megabytes, while additional modules can push the footprint into gigabytes. Memory usage during analysis is more variable: SPSS may allocate tens to hundreds of megabytes for routine tasks, and significantly more when handling large datasets, complex models, or interactive visualizations. Environmental factors also matter: the operating system, file-system allocation overhead, and background processes can influence the observed footprint. From a software engineering perspective, SPSS's size is a function of three interrelated dimensions: on-disk footprint, in-memory consumption, and transient storage such as temporary files and caches. For teams, understanding this triad helps with capacity planning, backup strategies, and deployment choices. SoftLinked analysis suggests that any SPSS sizing exercise should include a worst-case scenario based on the largest dataset you expect to process in a work cycle. Also consider how future upgrades or module activations could alter the footprint, and plan for incremental growth rather than a single peak requirement.
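The worst-case step can be made concrete with a back-of-envelope memory estimate for the largest dataset. The sketch below is a minimal Python illustration, not an SPSS tool: it assumes each numeric value occupies 8 bytes per case (as double-precision values do in the .sav format) and that string variables take roughly their declared width. The `overhead_factor` of 2.0 is a hypothetical allowance for working copies and temporary results, not a documented SPSS figure.

```python
from typing import List, Optional

BYTES_PER_NUMERIC = 8  # assumption: one 8-byte double per numeric value


def estimate_dataset_mb(
    n_cases: int,
    n_numeric: int,
    string_widths: Optional[List[int]] = None,
    overhead_factor: float = 2.0,  # hypothetical allowance, not an SPSS figure
) -> float:
    """Approximate working memory for one dataset, in MiB."""
    if n_cases < 0 or n_numeric < 0:
        raise ValueError("case and variable counts must be non-negative")
    widths = string_widths or []
    # Bytes per case: numeric values plus the declared width of each string.
    bytes_per_case = BYTES_PER_NUMERIC * n_numeric + sum(widths)
    raw_bytes = n_cases * bytes_per_case
    return raw_bytes * overhead_factor / (1024 ** 2)


# Size against the largest dataset expected in a work cycle (worst case).
worst_case_mb = estimate_dataset_mb(n_cases=1_000_000, n_numeric=200,
                                    string_widths=[40, 40])
print(f"Plan for roughly {worst_case_mb:,.0f} MB of working memory")
```

The overhead factor is the knob to calibrate: after a trial run with representative data, replace 2.0 with the ratio you actually observe.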

Editions and Modules Drive Size Differences

SPSS size is not uniform across users because it scales with the edition and modules installed. A base installation provides core statistics capabilities in a compact footprint, while optional analytics modules increase both memory and disk requirements. The difference is most pronounced when you enable visualization tools, advanced modeling, or structural equation modeling. For teams, this means performing a module-by-module sizing exercise during procurement and deployment planning. A practical approach is to list every module your team plans to use over the next year and estimate their cumulative impact on disk space and RAM. As SoftLinked analysis notes, planning should cover both current needs and potential future add-ons to prevent surprise growth at upgrade time.
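A module-by-module sizing exercise amounts to a small bill of materials with a running total. The Python sketch below is one way to keep that list; the component names and every number in it are hypothetical placeholders, to be replaced with figures from vendor documentation or your own audits.

```python
from dataclasses import dataclass
from typing import Iterable, Tuple


@dataclass
class Component:
    name: str
    disk_mb: float  # estimated installed size
    ram_mb: float   # estimated extra working memory while the module is in use


def total_footprint(components: Iterable[Component]) -> Tuple[float, float]:
    """Sum disk and RAM estimates across all planned components."""
    items = list(components)
    disk = sum(c.disk_mb for c in items)
    # Summing RAM assumes every module is active at once: a deliberately
    # conservative upper bound rather than a typical-session figure.
    ram = sum(c.ram_mb for c in items)
    return disk, ram


# Hypothetical bill of materials; names and numbers are placeholders.
planned = [
    Component("core statistics", disk_mb=600, ram_mb=256),
    Component("advanced modeling add-on", disk_mb=300, ram_mb=512),
    Component("visualization add-on", disk_mb=200, ram_mb=256),
]
disk, ram = total_footprint(planned)
print(f"Disk: {disk:.0f} MB, additional RAM: {ram:.0f} MB")
```

Keeping the list in one place also makes it easy to add next year's planned modules and see the cumulative effect before an upgrade.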

Installation Footprint vs Runtime Footprint

The initial on-disk footprint is only part of the story. Once SPSS is installed, runtime memory use depends on the size of your datasets, the complexity of your models, and whether you’re doing interactive exploration or batch processing. Loaded data and intermediate results raise memory use, while cache and temporary files add to disk use, so neither figure can be read off the installation size alone. On systems with constrained RAM, analysts should monitor peak usage and account for concurrent tasks such as data import, pre-processing, and visualization. In practice, this means leaving headroom for session variables and temporary calculations, and applying a cache purge policy to keep the working set manageable. SoftLinked recommends documenting expected memory ceilings for common workflows and testing with representative data samples before going into production.
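Leaving headroom can be expressed as a simple check: does the expected peak (baseline plus dataset plus concurrent work) fit in RAM once a reserved share is set aside? The Python sketch below assumes a 25% headroom share, which is an illustrative default rather than an SPSS recommendation; the workstation figures in the example are hypothetical.

```python
from typing import NamedTuple


class HeadroomCheck(NamedTuple):
    fits: bool
    peak_mb: float
    usable_mb: float


def check_memory_headroom(total_ram_mb: float, baseline_mb: float,
                          dataset_mb: float, concurrent_mb: float,
                          headroom: float = 0.25) -> HeadroomCheck:
    """Compare an expected peak against RAM minus a reserved headroom share."""
    if not 0 <= headroom < 1:
        raise ValueError("headroom must be in [0, 1)")
    peak = baseline_mb + dataset_mb + concurrent_mb
    usable = total_ram_mb * (1 - headroom)
    return HeadroomCheck(peak <= usable, peak, usable)


# Hypothetical workstation: 16 GB RAM, 4 GB for the OS and SPSS itself,
# a ~3.2 GB dataset, and 2 GB for concurrent import/visualization work.
result = check_memory_headroom(16384, 4096, 3204, 2048)
print(result)
```

Feeding in peaks measured during representative test runs, rather than guesses, turns this into the documented memory ceiling the paragraph recommends.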

Real-World Size Scenarios Across Platforms

Platform details differ, but the size range for base installations tends to be similar across Windows, macOS, and Linux. In virtualized or cloud deployments, the guest OS and hypervisor layers add overhead that pushes the effective footprint higher. For desktop users, expect anything from a few hundred MB to a couple of GB once all modules are installed and datasets are non-trivial. In enterprise deployments, administrators often provision space for multi-user workspaces, backups, and data caches. When moving SPSS into a virtual environment, consider disk provisioning strategies and how page file or swap configuration affects performance. Across platforms, the key takeaway is to factor both on-disk size and runtime memory into capacity planning and to validate with real workloads.

How to Estimate and Plan for SPSS Size

A practical sizing plan begins before installation. Start with a bill of materials: core SPSS, each module you plan to use, and any additional components such as visualization add-ons. Next, estimate the base footprint from vendor documentation or SoftLinked analysis, then add per-module estimates to reflect expected growth. After installation, audit the actual on-disk size and monitor RAM consumption during a set of representative workloads. If you manage a team, establish a per-user space baseline and scale storage and memory allocations accordingly. It’s also wise to track growth over time, so you can anticipate future needs based on dataset size, number of concurrent users, and project timelines. Finally, consider deployment tiers (local desktop, on-premises server, or cloud) that balance cost and performance while accommodating size variability.
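The post-installation audit can be done with a few lines of standard-library Python that walk the install directory and compare the result with the pre-installation estimate. This is a generic sketch, not an SPSS utility: `INSTALL_DIR` is a hypothetical path (the real location depends on platform, version, and installer choices), and the 1100 MB estimate is a placeholder.

```python
import os


def directory_size_mb(root: str) -> float:
    """Sum the sizes of regular files under root, in MiB."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = os.path.join(dirpath, name)
            if os.path.islink(path):
                continue  # skip symlinks so targets are not double-counted
            try:
                total += os.path.getsize(path)
            except OSError:
                continue  # file vanished or is unreadable; skip it
    return total / (1024 ** 2)


def percent_over_estimate(actual_mb: float, estimated_mb: float) -> float:
    """Positive when the audit exceeds the estimate, negative when under."""
    return (actual_mb - estimated_mb) * 100 / estimated_mb


# Hypothetical install location and placeholder estimate (1100 MB).
INSTALL_DIR = "/opt/IBM/SPSS/Statistics"
if os.path.isdir(INSTALL_DIR):
    actual = directory_size_mb(INSTALL_DIR)
    print(f"{actual:.0f} MB on disk, "
          f"{percent_over_estimate(actual, 1100):+.1f}% vs. estimate")
```

Note that this counts apparent file sizes; actual disk usage can be somewhat higher because file systems allocate space in whole blocks.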

Practical Tips for Managing SPSS Footprint

To keep SPSS size under control, implement a few disciplined practices. Regularly audit installed modules to remove any unused add-ons, enable cache purging on session end, and document expected storage requirements for each project. When planning for teams, build in headroom for growth, and use a shared repository strategy for datasets to avoid duplicating data across workspaces. If disk space is limited, consider a staged deployment where non-critical modules are installed on-demand. Finally, perform periodic reviews of system performance to ensure memory pressure does not degrade responsiveness during analytics tasks. These steps help ensure SPSS size remains aligned with project goals and hardware capabilities.
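A cache purge policy can be as simple as removing files that have not been modified for a set number of days. The Python sketch below is a generic file-age purge, not an SPSS feature; it defaults to a dry run that only lists candidates, and the directory in the example is a placeholder to be pointed at whatever temporary folder your SPSS installation is configured to use.

```python
import time
from pathlib import Path
from typing import List, Optional


def purge_old_files(cache_dir: str, max_age_days: float = 7,
                    dry_run: bool = True,
                    now: Optional[float] = None) -> List[Path]:
    """Remove files not modified within max_age_days.

    With dry_run=True (the default) nothing is deleted; the function only
    returns the files that would be removed, so the policy can be reviewed.
    """
    root = Path(cache_dir)
    if not root.is_dir():
        return []
    cutoff = (time.time() if now is None else now) - max_age_days * 86_400
    stale: List[Path] = []
    # Materialize the listing first so deletions don't disturb the walk.
    for path in list(root.rglob("*")):
        if path.is_symlink() or not path.is_file():
            continue
        try:
            modified = path.stat().st_mtime
        except FileNotFoundError:
            continue  # removed by another process since the listing
        if modified < cutoff:
            stale.append(path)
            if not dry_run:
                path.unlink()
    return stale


# Placeholder path: review the dry-run output before passing dry_run=False.
for p in purge_old_files("/tmp/spss-cache-example", max_age_days=14):
    print("would remove:", p)
```

Running it in dry-run mode first, and only on a directory dedicated to temporary files, avoids deleting shared datasets by accident.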

SoftLinked Perspective: Size as a Tool for Project Planning

From SoftLinked’s standpoint, software size is a crucial lever for project planning and resource allocation. A thoughtful sizing approach helps teams align hardware budgets with analytics ambitions, reduces the risk of performance bottlenecks, and supports smoother deployments across development, testing, and production environments. By documenting expected footprints and establishing monitoring practices, engineers and analysts can anticipate needs, maintain performance, and minimize surprises as datasets grow and teams scale.

SPSS size components and disk footprint

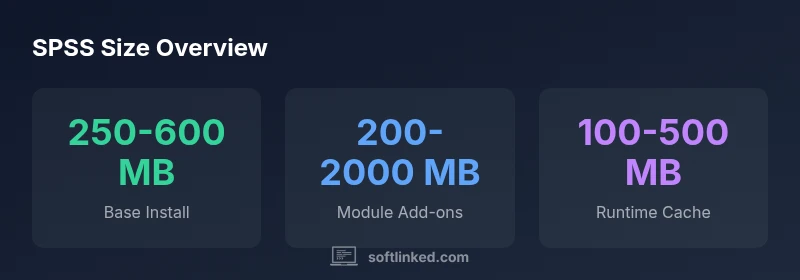

| Component | Approx. size (MB) | Notes |
|---|---|---|
| Base Installation | 250-600 | Typical footprint for core SPSS Statistics on desktop platforms |
| Add-on Modules | 200-2000 | Depends on modules installed; larger feature sets increase size |
| Temporary/Caches | 50-300 | Varies with usage; purge policies help control growth |
| Virtualized Deployment | 500-1500 | Hypervisor and OS overhead affect footprint |
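Treating the table rows as additive gives a quick lower and upper bound for a full deployment. That is an assumption: the rows are independent ranges, and real components may share files, so the sum is a planning envelope rather than a measurement. A minimal Python sketch using the table's figures:

```python
from typing import Dict, Iterable, Tuple

# Ranges in MB, taken from the component table above.
COMPONENT_RANGES_MB: Dict[str, Tuple[int, int]] = {
    "Base Installation": (250, 600),
    "Add-on Modules": (200, 2000),
    "Temporary/Caches": (50, 300),
    "Virtualized Deployment": (500, 1500),
}


def total_range_mb(components: Iterable[str]) -> Tuple[int, int]:
    """Add the low and high ends of each selected component's range."""
    selected = [COMPONENT_RANGES_MB[name] for name in components]
    return sum(lo for lo, _ in selected), sum(hi for _, hi in selected)


desktop = total_range_mb(["Base Installation", "Add-on Modules",
                          "Temporary/Caches"])
virtual = total_range_mb(COMPONENT_RANGES_MB)  # every row, including VM overhead
print(f"Desktop: {desktop[0]}-{desktop[1]} MB; VM: {virtual[0]}-{virtual[1]} MB")
```

The desktop bound works out to 500-2900 MB, in line with the "few hundred MB to a couple of GB" range described for desktop users.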

Your Questions Answered

How does SPSS size differ between editions?

Edition differences reflect included modules; base is smaller, while add-ons expand the footprint. Plan per-edition space based on planned workflows.

What factors influence SPSS RAM usage during analysis?

RAM usage grows with data size, variable complexity, and features like advanced statistics or visualization. Larger datasets and multi-step analyses demand more memory.

Can SPSS be run in a lightweight VM or container?

Yes, SPSS can run in lightweight VMs or containers, but ensure allocated RAM and disk meet peak workload needs to avoid performance bottlenecks.

Does spreading data across datasets affect SPSS size?

Data size impacts memory and disk usage; multiple datasets in a project increase workspace requirements and may influence caching behavior.

How can teams plan for SPSS storage in projects?

Use baselines, track modules, estimate per-user storage, and consider caching policies. Reassess during project milestones as data grows.
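The planning steps in this answer can be sketched as a simple projection to revisit at each milestone. This Python sketch assumes per-user workspaces stay fixed while shared data grows at a steady compound monthly rate; the team size, workspace size, and growth rate are hypothetical placeholders.

```python
def project_team_storage_gb(per_user_gb: float, users: int,
                            shared_data_gb: float, monthly_growth: float,
                            months: int) -> float:
    """Fixed per-user workspaces plus shared data growing at a compound rate."""
    if users < 0 or months < 0:
        raise ValueError("users and months must be non-negative")
    shared = shared_data_gb * (1 + monthly_growth) ** months
    return per_user_gb * users + shared


# Hypothetical team: 10 analysts with 5 GB workspaces each, 100 GB of shared
# datasets growing about 5% per month; reassess at each milestone.
for milestone in (3, 6, 12):
    total = project_team_storage_gb(5, 10, 100, 0.05, milestone)
    print(f"Month {milestone}: ~{total:.0f} GB")
```

When a milestone review shows actual usage diverging from the projection, update the growth rate from observed data rather than keeping the original guess.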

“Understanding software size is essential for performance planning; SPSS memory and disk footprints should be evaluated alongside dataset size.”

Top Takeaways

- Estimate base footprint first using current installation guidelines.

- Account for modules to assess total size before purchase.

- RAM needs scale with dataset size and feature usage.

- Regularly purge caches to manage growth.

- Plan storage with cross-platform variability in mind.