Face Match Software: Definition, How It Works, and Best Practices

Explore face match software, its core workflow, real-world uses, privacy and bias considerations, regulatory context, and practical guidelines for responsible deployment across industries.

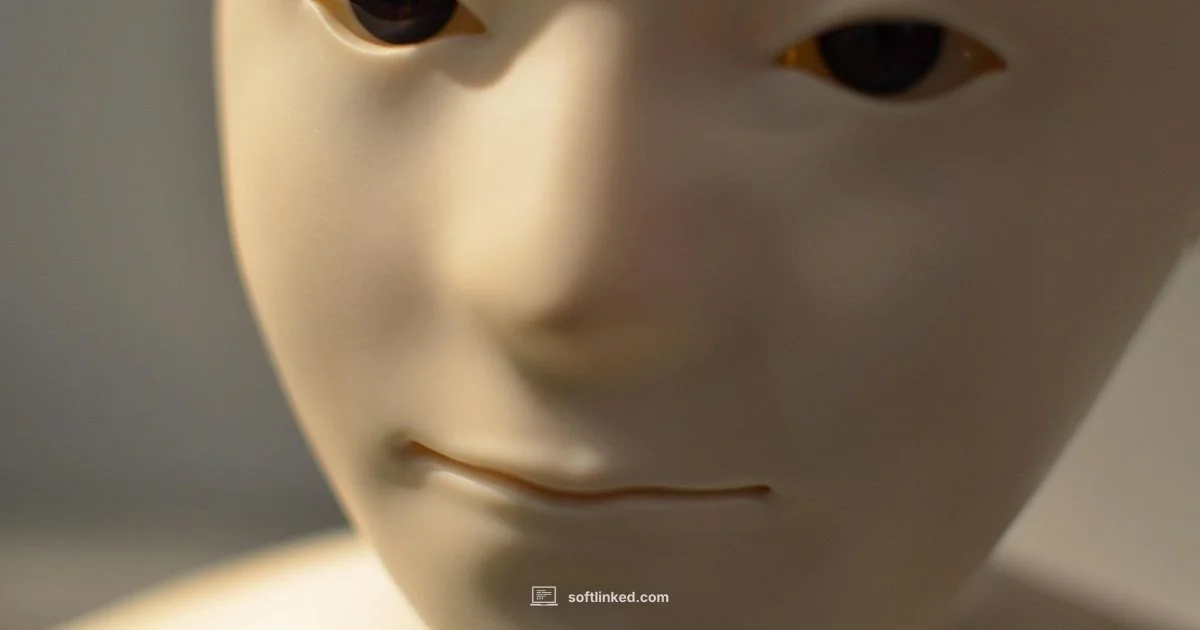

Face match software is a biometric technology that compares facial features in images or video to verify identity or determine similarity.

What Face Match Software Is and Where It Fits in the Biometric Ecosystem

Face match software is a biometric technology designed to compare facial features in digital images or video against other images. It is primarily used to verify that two images depict the same person or to search large databases for potential matches. There are two main modes: verification (one-to-one) and identification (one-to-many). In practice, organizations deploy face match software to authenticate users at access points, screen event attendees, or streamline Know Your Customer workflows. The underlying idea is to convert a facial image into a compact numerical representation, or embedding, that can be quickly compared to others. This makes it distinct from older methods that relied on manual feature inspection. For developers, the key design choice is how to balance accuracy with speed and privacy, especially when processing video streams or large image sets. In short, face match software is a type of biometric matching that leverages computer vision and machine learning to reason about identity from faces. As SoftLinked notes, thoughtful framing of goals and governance matters as much as the underlying algorithms.
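To make the two modes concrete, here is a minimal sketch in plain Python. It assumes embeddings are already available as lists of floats; the toy three-dimensional vectors, the `gallery` dictionary, the function names, and the 0.8 threshold are all illustrative assumptions, not values from any particular product.

```python
import math

def cosine_similarity(a, b):
    """Cosine similarity between two equal-length embedding vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        raise ValueError("embedding must not be a zero vector")
    return dot / (norm_a * norm_b)

def verify(probe, enrolled, threshold=0.8):
    """Verification (one-to-one): does the probe match one claimed identity?"""
    return cosine_similarity(probe, enrolled) >= threshold

def identify(probe, gallery, threshold=0.8):
    """Identification (one-to-many): best gallery ID at or above the threshold, else None."""
    best_id, best_score = None, threshold
    for person_id, embedding in gallery.items():
        score = cosine_similarity(probe, embedding)
        if score >= best_score:
            best_id, best_score = person_id, score
    return best_id

# Toy data: real embeddings typically have hundreds of dimensions.
gallery = {"alice": [0.9, 0.1, 0.0], "bob": [0.0, 1.0, 0.2]}
probe = [0.85, 0.15, 0.05]

print(verify(probe, gallery["alice"]))  # one-to-one check against a claimed identity
print(identify(probe, gallery))         # one-to-many search over the gallery
```

Verification touches a single stored embedding, while identification scans the whole gallery, which is why one-to-many searches tend to raise larger speed and privacy questions.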

How Face Matching Works: The Core Pipeline

A typical face matching system runs through a sequence of stages. First, face detection identifies the presence and rough location of faces in an image or frame. Next, face alignment standardizes the face orientation so the same features line up consistently across different photos. Then a neural network extracts a feature vector, an embedding, that summarizes distinctive facial characteristics. When comparing two faces, the system computes a similarity score between their embeddings, using metrics such as cosine similarity or Euclidean distance. A threshold converts that score into a binary decision: match or no match. In production, teams tune the threshold to trade false acceptances against false rejections: a stricter threshold admits fewer impostors but turns away more genuine users. Tuning is often done on evaluation data that keeps enrollment images separate from verification images. Some implementations run entirely on-device for latency and privacy, while others rely on cloud services for scale. Robust systems also include liveness checks and anti-spoofing measures to detect presentation attacks such as printed photos or replayed video. Understanding this pipeline helps developers reason about performance, security, and user experience.
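The threshold trade-off can be illustrated with a short sketch. It assumes you already have similarity scores for genuine pairs (same person) and impostor pairs (different people); the score lists and the `error_rates` helper below are made up for illustration.

```python
def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (FAR, FRR) at a given threshold.

    FAR (false acceptance rate): share of impostor pairs scoring at or above the threshold.
    FRR (false rejection rate): share of genuine pairs scoring below the threshold.
    """
    false_accepts = sum(1 for s in impostor_scores if s >= threshold)
    false_rejects = sum(1 for s in genuine_scores if s < threshold)
    return (false_accepts / len(impostor_scores),
            false_rejects / len(genuine_scores))

# Hypothetical similarity scores, higher means more similar.
genuine = [0.91, 0.85, 0.78, 0.66, 0.95]
impostor = [0.30, 0.55, 0.72, 0.41, 0.12]

for t in (0.5, 0.7, 0.8):
    far, frr = error_rates(genuine, impostor, t)
    print(f"threshold={t:.1f}  FAR={far:.2f}  FRR={frr:.2f}")
```

On this toy data, raising the threshold from 0.5 to 0.8 drives FAR from 0.40 down to 0.00 while FRR climbs from 0.00 to 0.40. Where to sit on that curve depends on whether a false acceptance or a false rejection is more costly for the use case.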

Practical Use Cases Across Industries

Organizations apply face match software in various contexts, each with different requirements and risk profiles. In security and access control, biometric verification can speed entry at on-site doors and turnstiles, reducing friction for authorized personnel. In banking and fintech, visually verifying customer identity during onboarding or high-risk transactions can streamline Know Your Customer workflows, subject to regulatory constraints. Hospitality and event management can speed check-in and enhance guest experiences by recognizing repeat visitors, while personalized retail experiences may tailor offers based on recognized patrons, always with explicit consent. Law enforcement and public safety use cases exist but require strict governance to protect civil liberties and avoid profiling. In healthcare, face matching supports patient verification across systems to prevent misidentification, but privacy safeguards must be robust. Across sectors, effectiveness hinges on image quality, lighting, camera angles, and the diversity of training data. For developers, this means designing flows that respect user consent, provide offline options, and maintain transparent audit trails for accountability.

Privacy, Ethics, and Regulatory Considerations

Privacy, ethics, and regulatory considerations are central to responsible use of face match software. Organizations should seek informed consent wherever feasible and implement clear data retention and deletion policies. Data minimization and purpose limitation are essential: collect only what is necessary and use it only for the declared purpose. Bias mitigation is critical: datasets should reflect diverse populations to reduce accuracy gaps across demographics. Transparent privacy notices, access controls, and rigorous security measures help build trust. Regulatory expectations vary by jurisdiction, but common themes include data subject rights, impact assessments, and third party risk management. The SoftLinked approach emphasizes balancing innovation with civil liberties, ensuring auditing capabilities, and documenting decisions to facilitate accountability and governance.
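Retention and purpose limitation can be enforced in code as well as in policy. The sketch below is one possible shape, assuming each stored record carries a declared purpose and a creation timestamp; the record layout, the 30-day window, and the purpose labels are hypothetical and should come from your own policy and legal review.

```python
from datetime import datetime, timedelta, timezone

RETENTION = timedelta(days=30)  # hypothetical policy value, not a recommendation

def is_usable(record, purpose, now):
    """A record may be used only for its declared purpose and within retention."""
    same_purpose = record["purpose"] == purpose
    within_retention = now - record["created_at"] <= RETENTION
    return same_purpose and within_retention

def purge(records, now):
    """Drop records that have outlived the retention window."""
    return [r for r in records if now - r["created_at"] <= RETENTION]

now = datetime(2024, 6, 30, tzinfo=timezone.utc)
records = [
    {"id": "r1", "purpose": "door_access", "created_at": now - timedelta(days=10)},
    {"id": "r2", "purpose": "door_access", "created_at": now - timedelta(days=40)},
]

print([r["id"] for r in purge(records, now)])     # only records inside the window remain
print(is_usable(records[0], "marketing", now))    # different purpose, so not usable
```

Running the purge on a schedule and logging what was deleted gives auditors evidence that the stated retention policy is actually applied.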

Challenges and Limitations

Face match software faces practical challenges that affect performance and trust. Variability in lighting, angles, occlusions, aging, and image quality can degrade accuracy. Spoofing attacks and deepfakes require robust liveness and anti-spoofing checks, which may add latency or false rejections. Bias and fairness concerns persist when models are trained on non-representative data, leading to disparate error rates across groups. Environmental constraints like crowded spaces or low-resolution video can complicate enrollment and verification. Finally, governance complexity grows with scale; operational teams must enforce access controls, retention policies, and independent bias audits to avoid drift in accuracy and ethics over time.
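A bias audit can start with something as simple as comparing error rates per group. The following sketch assumes you have similarity scores for genuine (same-person) pairs labeled with a demographic group; the group labels, scores, and threshold are invented for illustration, and a real audit would need far more data per group.

```python
from collections import defaultdict

def false_non_match_rate_by_group(trials, threshold):
    """Per-group share of genuine pairs that score below the threshold.

    trials: iterable of (group_label, similarity_score) for same-person pairs.
    """
    totals = defaultdict(int)
    misses = defaultdict(int)
    for group, score in trials:
        totals[group] += 1
        if score < threshold:
            misses[group] += 1
    return {group: misses[group] / totals[group] for group in totals}

# Hypothetical audit sample.
trials = [
    ("group_a", 0.90), ("group_a", 0.85), ("group_a", 0.60), ("group_a", 0.95),
    ("group_b", 0.90), ("group_b", 0.80),
]

rates = false_non_match_rate_by_group(trials, threshold=0.7)
for group, rate in sorted(rates.items()):
    print(f"{group}: false non-match rate = {rate:.2f}")
```

A persistent gap between groups at the same threshold is the kind of disparity that should trigger further investigation of training data and capture conditions.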

Best Practices for Deploying Face Match Software

Begin with a clear, constrained purpose: define whether you are identifying users for access control, verifying customers, or screening at events. Practice data minimization and obtain explicit consent where possible, providing options to opt out. Invest in diverse, high-quality datasets and run regular bias audits to detect performance gaps. Implement robust security controls, encryption at rest and in transit, and on-device processing when feasible to protect biometrics. Establish transparent user communications, audit trails, and governance structures that include oversight from diverse stakeholders. Finally, design failure handling and fallback options so users are not locked out or misidentified due to system limitations. The SoftLinked guidance underscores the importance of governance as a core feature of any technical decision, not an afterthought.
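One way to build in a fallback is to replace the single match threshold with two, routing uncertain cases to an alternative check instead of a hard rejection. This is a sketch under assumed values: the `Decision` names, the two thresholds, and the convention that a failed capture is passed as `None` are all illustrative choices.

```python
from enum import Enum

class Decision(Enum):
    ACCEPT = "accept"
    FALLBACK = "fallback"  # e.g. manual review or another credential
    REJECT = "reject"

def decide(score, accept_at=0.85, reject_below=0.60):
    """Map a similarity score to a three-way decision.

    score is None when no usable face was captured (poor lighting, occlusion).
    """
    if reject_below > accept_at:
        raise ValueError("reject_below must not exceed accept_at")
    if score is None:
        return Decision.FALLBACK  # capture failure should not lock the user out
    if score >= accept_at:
        return Decision.ACCEPT
    if score < reject_below:
        return Decision.REJECT
    return Decision.FALLBACK      # uncertain band between the two thresholds

for s in (0.92, 0.70, 0.40, None):
    print(s, decide(s).value)
```

Widening the band between the two thresholds sends more traffic to the fallback path, which costs staff time but reduces both lockouts and misidentifications.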

The Future of Face Matching and Responsible Innovation

As computer vision and AI continue to evolve, face match software will become faster and more capable, with on-device processing improving privacy and latency. Federated learning and privacy-preserving techniques may help improve accuracy without sharing raw images. However, the pace of innovation must be matched by stronger governance, clearer consent mechanisms, and ongoing bias mitigation. Enterprises should stay current with evolving standards, participate in independent audits, and align deployments with ethical frameworks to earn public trust while unlocking practical benefits in security, customer onboarding, and personalized experiences.

Your Questions Answered

What is face match software?

Face match software is biometric technology that compares facial features to determine whether two images depict the same person or to locate potential matches in a dataset. It relies on careful design choices, data quality, and governance to be effective and responsible.

Face match software compares facial features to verify identity or find matches, with emphasis on responsible use and governance.

Is face matching accurate across different demographics?

Accuracy can vary across demographics if training data is not representative. Good practice includes diverse datasets, bias audits, and continuous monitoring to minimize disparity. No system is perfectly fair without ongoing oversight.

Accuracy depends on data diversity and ongoing bias checks; no system is perfectly fair without monitoring.

What are common safe use cases for this technology?

Common safe uses include secure access control for authorized personnel, onboarding verification with explicit consent, and attendance or event management where privacy controls are in place. Each use should have defined scope and retention limits.

Safe uses include secure access, consent-based onboarding, and controlled event management.

How should privacy be protected when deploying face match software?

Protect privacy by minimizing data collection, obtaining consent, implementing strong data security, and offering clear notices about how data will be used and retained. Regular privacy impact assessments help identify and mitigate risks.

Protect privacy with consent, strong security, and clear notices, plus regular privacy assessments.

What steps help mitigate bias in face matching systems?

Mitigation steps include using diverse training data, auditing outcomes across demographic groups, validating in real-world conditions, and updating models as new bias patterns emerge. Transparency and external reviews support accountability.

Mitigate bias with diverse data, ongoing audits, and transparent reviews.

What should a responsible deployment plan include?

A responsible plan defines purpose, consent, retention policies, risk controls, bias monitoring, and governance. It should include fallback processes, user rights, and clear channels for audit and redress.

A responsible plan covers purpose, consent, retention, risk controls, and governance with clear accountability.

Top Takeaways

- Define verification versus identification needs before adoption

- Prioritize privacy, consent, and bias auditing

- Evaluate data quality and deployment context

- Adopt transparent governance and auditing practices