Can Software Cause Hardware Failure: Mechanisms, Risks, and Mitigation

Explore how software can influence hardware health, including drivers, firmware, and control software. Learn symptoms, risk scenarios, and practical mitigation strategies to reduce software-induced hardware failures. A SoftLinked guide for developers and engineers seeking clarity on software–hardware interactions.

Software-induced hardware failure is a risk in which faults in software drive hardware into unsafe states, stress components, or degrade performance, potentially leading to damage.

What we mean by can software cause hardware failure

When people ask whether software can cause hardware failure, they are asking whether software faults can push physical components into unsafe operating conditions or accelerate wear. The short answer is yes, but typically in an indirect way. Software can influence hardware through drivers, firmware, and control logic. A misbehaving driver might drive a motor beyond safe limits, a firmware bug could unlock unsafe states in a sensor array, or a software loop could schedule tasks in a way that causes components to run hot or stress beyond their design margins. Importantly, this is a software reliability and safety topic as much as a hardware one. The goal is to understand how to reduce risk so that software does not cause hardware to fail or degrade faster than intended.

In this article we use the question "can software cause hardware failure" as the framing device to discuss mechanisms, risk factors, and mitigations. We also discuss how to design for resilience so that systems remain safe even when individual components behave unexpectedly. The emphasis is on practical, testable guidance that applies across embedded systems, consumer electronics, and enterprise infrastructure. The SoftLinked team highlights that rigorous testing and monitoring are essential to minimize software-induced hardware risk.

How software can indirectly damage hardware

Software rarely causes direct physical damage by itself, but it can create conditions that stress hardware, shorten its lifespan, or trigger failures. Common pathways include overheating due to software-controlled duty cycles or fan management that misreads temperature data, drive controllers issuing unsafe command sequences, and power management bugs that fail to respect voltage rails. In some cases, software faults lead to resource exhaustion that keeps components in high-load states longer than anticipated, accelerating wear. Another channel is safety-critical systems where software controls actuators; if the software misinterprets sensor data, it can cause hardware to operate outside safe parameters. Finally, malware or compromised software can bypass safeguards, enable unsafe configurations, or disable monitoring, making hardware more vulnerable to damage. Understanding these pathways helps engineers design better containment and monitoring around software–hardware interactions.

To keep software-induced hardware failure a theoretical concern rather than a production incident, teams should strictly validate software against its hardware interfaces, use fail-safe defaults, and ensure that critical hardware paths have independent monitoring and watchdogs. Defense in depth between software layers and hardware components reduces the chance that a software fault translates into hardware stress or damage.
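As a rough illustration of a fail-safe default, the sketch below maps a temperature reading to a fan duty cycle and falls back to full speed whenever the reading is missing or implausible. The plausibility window, the ramp points, and the function name `fan_duty_percent` are assumptions made for this example, not values from any particular device.

```python
import math

# Hypothetical plausibility window for a board temperature sensor, in Celsius.
MIN_PLAUSIBLE_C = -40.0
MAX_PLAUSIBLE_C = 125.0


def fan_duty_percent(temp_c):
    """Map a temperature reading to a fan duty cycle between 0 and 100 percent."""
    # A missing, NaN, or out-of-window reading is treated as a sensor fault.
    # The fail-safe default is full cooling rather than trusting bad data.
    if temp_c is None or math.isnan(temp_c):
        return 100.0
    if not (MIN_PLAUSIBLE_C <= temp_c <= MAX_PLAUSIBLE_C):
        return 100.0

    # Illustrative linear ramp: 20% at or below 40 C, 100% at or above 80 C.
    if temp_c <= 40.0:
        return 20.0
    if temp_c >= 80.0:
        return 100.0
    return 20.0 + (temp_c - 40.0) * 2.0
```

The design choice here is that an untrustworthy input should never lower cooling. In a real product the safe state depends on the hardware, so it should come from the hardware team's hazard analysis.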

Mechanisms: drivers, firmware, and control loops

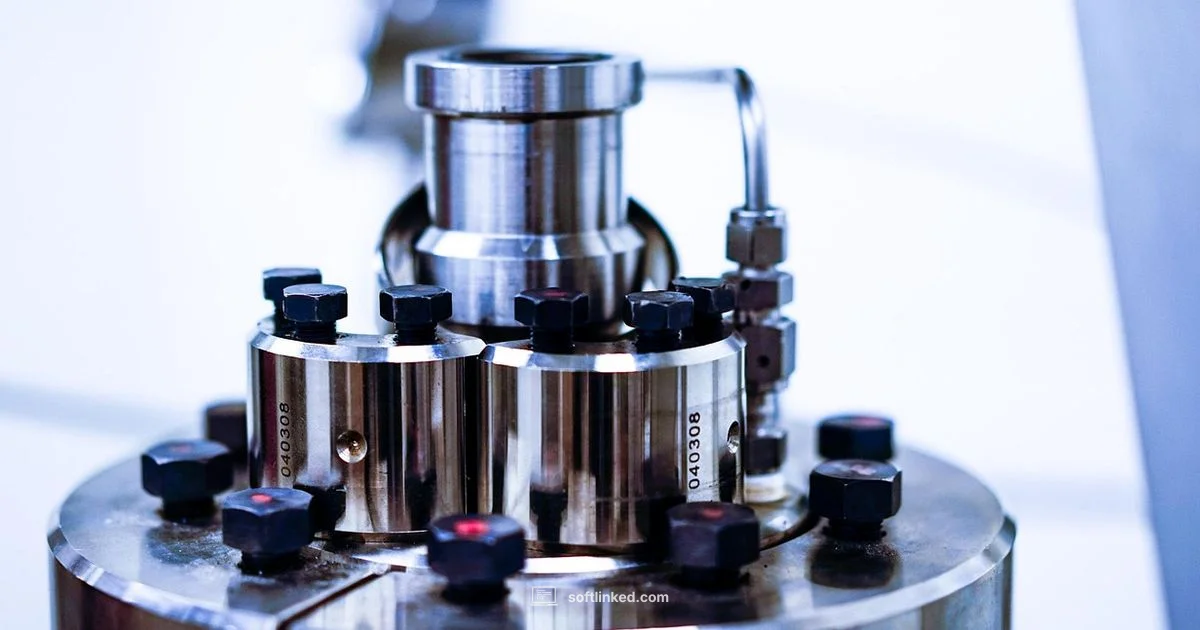

Drivers translate software requests into hardware actions. A bug in a driver can produce incorrect voltage levels, out-of-range PWM signals, or spurious interrupts that push hardware beyond safe operating margins. Firmware sits closer to the hardware and can misbehave during updates or after fault recovery, potentially leaving devices in inconsistent, unsafe states. Control loops in software that regulate temperature, motor speed, or power delivery rely on accurate sensor data; if sensor input is degraded or misread, the loop can drive outputs that heat components, wear mechanical parts, or create resonance that harms circuitry.
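One common guard against out-of-range PWM signals is to clamp every requested value at the driver boundary. The following is a minimal sketch of that idea. The limits `MIN_DUTY` and `MAX_DUTY` and the function `safe_pwm_duty` are hypothetical, and a real driver would take its limits from the component datasheet.

```python
import math

# Hypothetical safe duty-cycle window for an illustrative motor driver.
MIN_DUTY = 0.0
MAX_DUTY = 0.85


def safe_pwm_duty(requested):
    """Return a duty cycle that always lies inside [MIN_DUTY, MAX_DUTY]."""
    # A non-numeric or non-finite request points to an upstream bug,
    # so fall back to the lowest output instead of passing it on.
    if not isinstance(requested, (int, float)) or not math.isfinite(requested):
        return MIN_DUTY
    # Clamp in-range-looking requests to the configured window.
    return max(MIN_DUTY, min(MAX_DUTY, float(requested)))
```

With these assumed limits, a request of `1.2` is clamped to `0.85` and a NaN request yields `0.0`.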

In embedded systems and IoT, where software tightly coordinates hardware, even small errors can cascade. For example, an overaggressive cooling strategy driven by faulty temperature readings can overcompensate, affecting humidity thresholds or fan wear. In security terms, compromised software can disable monitoring or alter safety limits, making hardware more susceptible to damage during normal operation. The takeaway is that drivers, firmware, and real-time control software are the common chokepoints where software is most likely to cause hardware failure.

Real-world failure modes you might observe

Does software-induced hardware failure show up in observable ways? Yes, through a spectrum of symptoms. You might see unexpected reboots or lockups that occur under heavy load, subtle performance degradation that correlates with software updates, or alarms triggered by hardware sensors that are read incorrectly. Overheating, degraded battery health in devices, or abnormal actuator behavior (for example, jerky motor movement) can indicate software-driven stress on hardware. Firmware bricking after an update is another clear sign that a software fault in the update pipeline has caused a hardware failure. In some cases, the issue might be intermittent, appearing only under specific timing conditions or rare sensor readings, making it challenging to reproduce without precise instrumentation. Identifying whether software is contributing to such outcomes requires careful correlation of software changes with hardware telemetry and event logs.

Risk factors and scenarios

Several factors increase the likelihood that software-induced hardware failure becomes a real concern. Complex software stacks with multiple layers of drivers and firmware create more surface area for bugs to slip through. Real-time or near real-time systems with tight timing constraints are especially sensitive to software misbehavior. In embedded devices, improper power management logic or aggressive duty cycling can cause rapid thermal changes. Malware or compromised software that disables monitoring and safety checks poses a direct risk to hardware. Environment also matters; poor cooling, dusty devices, or fluctuating power supply conditions can amplify software-induced stress. Finally, hurried updates, insufficient rollback plans, and inadequate test coverage for hardware interfaces heighten risk. The overarching message is to treat software–hardware interfaces as critical fault points that deserve careful design and verification.

Mitigation strategies for developers and operators

Mitigation starts with rigorous testing at the software–hardware boundary. Use hardware-in-the-loop testing, which pairs software simulations with real hardware to observe how the system behaves under realistic loads. Implement defensive coding practices, such as validating sensor data, applying rate limits on control signals, and implementing safe timeouts for hardware operations. Build robust monitoring that traces software state, hardware telemetry, and environmental conditions; set up alerts for anomalies that could indicate a fault path leading to hardware stress. Employ fail-safe defaults that bring the system to a safe resting state if sensors fail or communications are degraded. Versioning and staged rollouts for firmware and drivers reduce the chance that a new update introduces unsafe configurations. Regularly audit your safety margins and test for edge cases, including stress tests and fault-injection scenarios.
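Rate limiting a control signal can be sketched as a simple slew-rate limiter: each update may move the output toward its target by no more than a fixed step. The function name `rate_limited` and the step size used below are assumptions for illustration only.

```python
def rate_limited(previous, target, max_step):
    """Move from `previous` toward `target` by at most `max_step` per call."""
    if max_step <= 0:
        raise ValueError("max_step must be positive")
    delta = target - previous
    # Cap the change in either direction so the actuator never sees a jump.
    if delta > max_step:
        return previous + max_step
    if delta < -max_step:
        return previous - max_step
    return target


# Usage: ramp a motor command from 0.0 to 1.0 in steps of at most 0.25.
command = 0.0
ramp = []
while command != 1.0:
    command = rate_limited(command, 1.0, 0.25)
    ramp.append(command)
```

Even if a bug upstream suddenly requests full output, the hardware sees a gradual ramp, which gives monitoring time to react.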

For teams working with safety-critical systems, implement formal hazard analysis and define quantifiable safety requirements tied to hardware tolerances. Design software with safety by design principles: conservative defaults, redundancy in critical paths, and independent hardware watchdogs to catch software faults before they translate into hardware action. Documentation and reproducibility matter, too; keeping a clear record of changes, test results, and rollback plans helps teams respond quickly when issues arise.
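The watchdog idea can be modeled in a few lines. Note that this is only a software model for illustration: an independent hardware watchdog is a separate timer circuit that resets or disables the system without relying on the software it supervises. The class name `Watchdog` and its methods are hypothetical.

```python
import time


class Watchdog:
    """Software model of a watchdog: trips if it is not kicked in time."""

    def __init__(self, timeout_s, on_expire, clock=time.monotonic):
        self._timeout_s = timeout_s
        self._on_expire = on_expire  # callback that drives outputs to a safe state
        self._clock = clock          # injectable so the model can be tested
        self._last_kick = clock()
        self.tripped = False

    def kick(self):
        """Called by the control loop on every healthy iteration."""
        if not self.tripped:
            self._last_kick = self._clock()

    def check(self):
        """Called by a separate supervisor; trips once if the deadline passed."""
        if not self.tripped and self._clock() - self._last_kick > self._timeout_s:
            self.tripped = True
            self._on_expire()
        return self.tripped
```

Once tripped, this model stays tripped and ignores further kicks, which mirrors the common policy that recovery from a safe state requires an explicit reset rather than the faulty loop resuming on its own.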

Design for resilience and safety

Resilience begins with clear separation of concerns between software layers and hardware components. Use modular architectures that limit the scope of each component and provide explicit interfaces with bounded inputs and outputs. Incorporate redundancy for critical sensors and actuators, along with diversified signaling paths to reduce single points of failure. Implement hardware fault containment, such as error detection codes, parity, and checksums, to help identify data corruption early. Build monitoring dashboards that correlate software health with hardware telemetry, enabling proactive maintenance before failures occur. When safety is a priority, adopt formal methods or model-based design to verify that software behavior remains within safe operating envelopes under a broad range of conditions. Finally, cultivate a culture of safety testing, including regular disaster recovery drills and simulated fault scenarios, to keep teams prepared for software-induced hardware failures in production.
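To make the checksum point concrete, here is a small sketch that appends a CRC-32 to a payload and rejects frames whose checksum does not match. The frame layout (payload followed by a 4-byte big-endian CRC) and the function names are assumptions for this example. `zlib.crc32` and `struct` are part of the Python standard library.

```python
import struct
import zlib


def frame_with_crc(payload: bytes) -> bytes:
    """Append a 4-byte big-endian CRC-32 of the payload."""
    return payload + struct.pack(">I", zlib.crc32(payload))


def payload_if_valid(frame: bytes):
    """Return the payload if its CRC matches, otherwise None."""
    if len(frame) < 4:
        return None  # too short to contain a checksum
    payload = frame[:-4]
    (received_crc,) = struct.unpack(">I", frame[-4:])
    if zlib.crc32(payload) != received_crc:
        return None  # corrupted in transit or storage; do not act on it
    return payload
```

A caller that receives `None` should treat the command or reading as missing and fall back to its safe default rather than guessing at the intended value.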

Investigating suspected software-induced hardware faults

If you suspect that software is contributing to hardware faults, begin with a careful replication strategy. Reproduce the fault with the same hardware and firmware versions, and capture detailed logs from software, drivers, and the hardware layers. Instrument telemetry to seek correlations between software events and hardware anomalies. Try isolating software by running with known-good configurations or on hardware in a test bench to determine if the issue persists. Use controlled experiments to rule out external factors like environmental conditions or power quality. If a fault is confirmed, implement a rollback path for the problematic software and run targeted tests on the updated components before redeploying. The investigation should be methodical, reproducible, and documented so teams can learn and prevent recurrence.
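One simple way to start looking for correlations is to list hardware anomalies that occur shortly after a software event such as a deployment or driver reload. The sketch below assumes both logs have already been reduced to timestamps in seconds. The function name and the window length are hypothetical, and a match only suggests where to look, since timing alone does not establish cause.

```python
from bisect import bisect_left


def anomalies_following_events(event_times, anomaly_times, window_s):
    """Return (event, anomaly) pairs where the anomaly occurs within
    `window_s` seconds at or after the event."""
    anomalies = sorted(anomaly_times)
    pairs = []
    for event in sorted(event_times):
        # Find the first anomaly at or after this event.
        i = bisect_left(anomalies, event)
        while i < len(anomalies) and anomalies[i] - event <= window_s:
            pairs.append((event, anomalies[i]))
            i += 1
    return pairs


# Usage with made-up timestamps: software events and thermal alarms.
matches = anomalies_following_events([100, 500], [90, 105, 130, 700], 30)
```

Here the alarm at 90 precedes every event and the alarm at 700 falls outside the window, so only the alarms at 105 and 130 are paired with the event at 100.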

Authoritative sources and reading list

For readers who want authoritative context on software reliability and hardware interaction, consult trusted sources:

- National Institute of Standards and Technology (NIST): Software topics and system reliability guidance, https://www.nist.gov/topics/software

- IEEE standards and materials on software and hardware interfaces, https://www.ieee.org

- University-level materials on software reliability and safety engineering, https://www.stanford.edu


Your Questions Answered

Can software damage hardware?

Software can cause hardware to fail indirectly by driving components beyond safe limits, mismanaging power or thermal controls, or disabling safety features. Direct physical damage is rare and typically results from extreme fault conditions or compromised software. Proper design and testing reduce these risks.

Software can damage hardware indirectly by driving components beyond safe limits or disabling safeguards. Direct damage is rare, but thorough testing helps prevent it.

Signs of software faults affecting hardware?

Look for unpredictable reboots, overheating events, degraded performance, sensor anomalies, or abnormal actuator behavior. Correlate these with software changes, driver updates, and firmware versions to determine if software is contributing to hardware faults.

Watch for sudden reboots, heat spikes, or odd sensor readings that align with software changes.

Are firmware updates risky for hardware health?

Firmware updates can introduce bugs or leave devices in unsafe states if not properly tested. Always follow vendor guidance, verify compatibility, and have rollback plans before updating firmware on critical hardware.

Firmware updates can be risky if not tested; verify compatibility and have a rollback plan.

How can I prevent software-induced hardware failures?

Adopt defensive programming, robust testing, and hardware-in-the-loop simulations. Use monitoring, safe defaults, and watchdogs to detect and stop unsafe behavior before it harms hardware.

Use defensive coding, testing, and monitoring to stop unsafe software from harming hardware.

Is malware a risk to hardware health?

Malware can disable monitoring, bypass safety checks, or alter configurations, increasing the chance of hardware stress or damage. Protect software supply chains and monitor for anomalous activity to mitigate this risk.

Malware can push hardware into unsafe states; protect software supply chains and monitor activity.

How do I diagnose software–hardware issues?

Start by reproducing the fault in a controlled setting, collect logs, and monitor both software state and hardware telemetry. Isolate software from hardware to pinpoint the root cause, then validate fixes with repeatable tests.

Reproduce the fault, gather logs, and isolate software from hardware to find the root cause.

Top Takeaways

- Test software with hardware in mind to catch software-induced hardware failures early

- Implement defensive coding to validate inputs from sensors and hardware controllers

- Use hardware-in-the-loop and safety-focused testing pipelines

- Design with failsafes and watchdogs to prevent unsafe hardware states

- Monitor software and hardware telemetry together to identify risk patterns